Agents Don't Live in Data Centers

Agentic AI is the first workload where the physics of latency and data locality aren't optional. The $700B centralized infrastructure bet was built for a different kind of AI.

The Goldman Sachs internal research note that leaked in early 2026 landed with unusual force: roughly $400 billion spent on AI infrastructure globally in 2025 had yet to show meaningful impact on revenue, margin, or competitive advantage. The market reassessed. Hyperscalers paused. And a quiet question started circulating in strategy meetings across the industry: what if we're building the wrong thing?

The infrastructure bet itself is staggering. Futurum Group estimates the 2026 capex sprint will push hyperscaler spending toward $700 billion annually. That's a civilizational wager on the assumption that artificial intelligence lives in centralized data centers, accessed via the cloud, delivered through the internet.

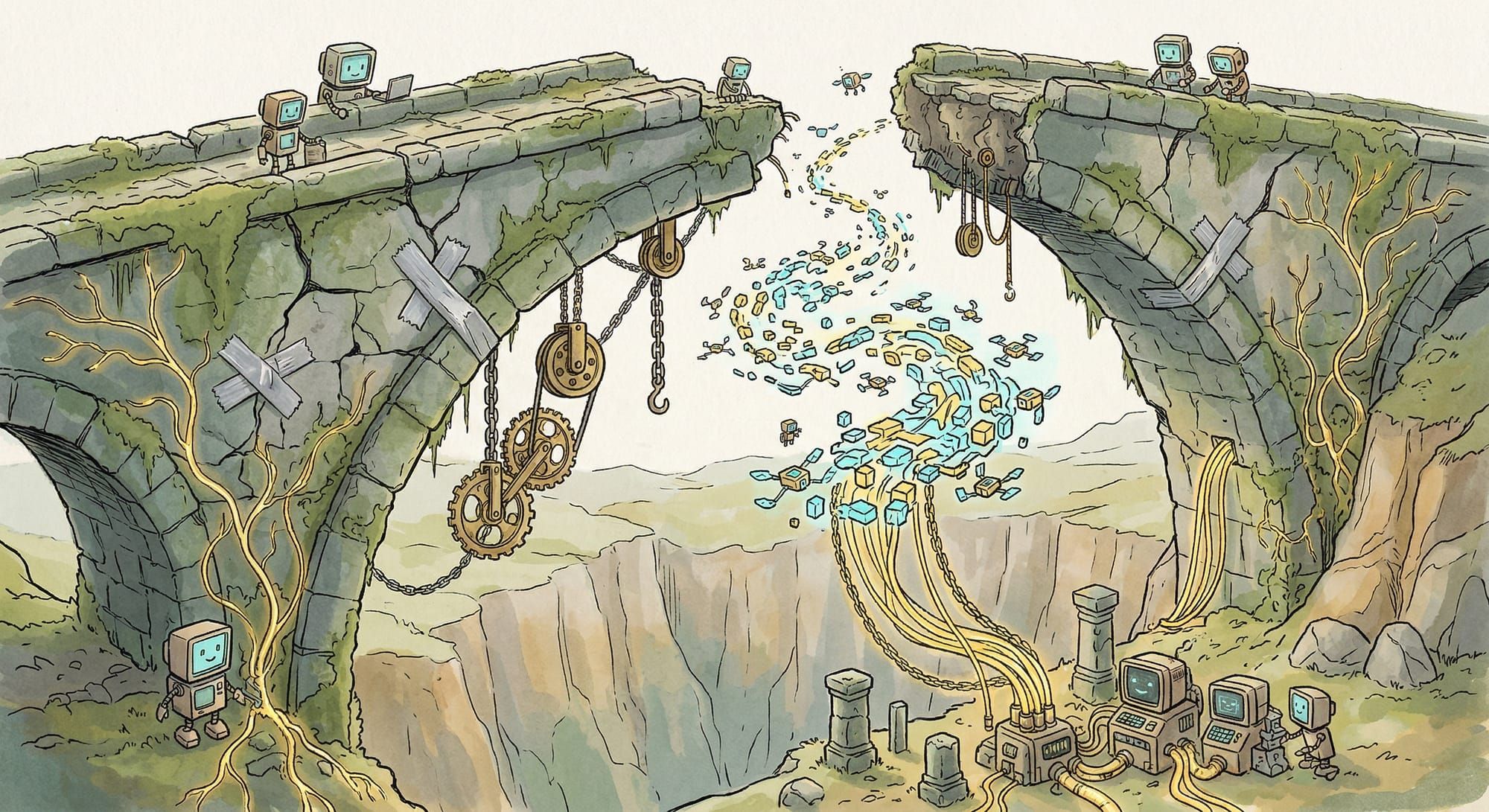

Agentic AI breaks that assumption in ways that pure language models never did.

The distinction matters because it's not semantic. When you ask a language model a question and get text back, latency is a nuisance. A few hundred milliseconds more or less doesn't break the interaction. You're waiting for an answer. The physics of distance and data movement are negotiable.

Agents operate in a fundamentally different regime. They're not answering questions. They're orchestrating actions across systems, making autonomous decisions in real time, coordinating with other agents and with devices that can't tolerate the latency of a round trip to a distant data center.

SiliconANGLE reported this month that agentic AI is forcing a fundamental rethink of network architecture. The core finding: you can't extend cloud architectures to the edge and expect autonomous agents to work. The infrastructure has to change from the foundation up. Agents need local intelligence. They need agent-to-agent coordination that doesn't require calling home. They need decision loops measured in tens or hundreds of milliseconds, not seconds. They need to operate across thousands of distributed sites simultaneously without collapsing into a bottleneck at the cloud.
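To put rough numbers on that: light in optical fiber covers about 200 km per millisecond, so distance alone sets a floor on round-trip time before any routing, queuing, or inference happens. Here is a back-of-the-envelope sketch. The distances and the 50 ms budget are illustrative assumptions, not measurements, and real-world round trips will be noticeably worse than this lower bound.

```python
# Back-of-the-envelope propagation delay. Assumes light in optical fiber
# travels at roughly 200,000 km/s (about two-thirds of c in vacuum).
# Distances and budgets used here are illustrative, not measured.
FIBER_KM_PER_MS = 200.0  # 200,000 km/s == 200 km per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation alone."""
    return 2 * distance_km / FIBER_KM_PER_MS


def fits_budget(distance_km: float, compute_ms: float, budget_ms: float) -> bool:
    """True if propagation plus compute time fits in the decision-loop budget."""
    return min_round_trip_ms(distance_km) + compute_ms <= budget_ms


if __name__ == "__main__":
    for label, km in [("on-site", 1), ("regional", 300), ("distant cloud", 3000)]:
        print(f"{label}: >= {min_round_trip_ms(km):.2f} ms round trip")
```

With 25 ms of compute and a 50 ms loop, a site 300 km away fits comfortably, while one 3,000 km away has already blown the budget on propagation alone.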

This is where the Goldman note and the infrastructure trends collide. The infrastructure bet assumes the future looks like the past: bigger clouds, fancier models, more data centers. But agents are the first AI workload where the physics of latency and data locality aren't nice-to-haves or performance optimizations. They're architectural requirements. The entire cost structure, deployment model, and compute strategy have to shift once "just send it to the cloud" stops working.

Ciena published research arguing that networks need to evolve from "passive interconnects" into programmable infrastructure. That's an obfuscated way of saying the network itself becomes part of the compute layer. The intelligence can't live only at the edge nodes or only in the cloud. It has to be distributed, with the network orchestrating which parts of the decision logic run where based on latency, cost, and reliability constraints.

AT&T's CTO cast doubt on far-edge compute in a recent interview, and he's right to be skeptical about purely local execution, because agents aren't purely local either. The myth that breaks is the flip side: the myth that all agents work best in the cloud and edge compute is just a caching layer. The reality is messier. Agents need parts of their decision loop running locally, parts coordinating with peers at regional aggregation points, and parts reaching back to centralized training infrastructure or human oversight. It's a spectrum, not a binary.
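One way to picture that spectrum is as a placement decision: for each step in an agent's loop, pick the cheapest tier that still meets the step's latency and reliability needs. The sketch below is a toy illustration, not anything Ciena or AT&T describes. The `Tier` and `Step` types, the `place` function, and every number in `TIERS` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    latency_ms: float     # assumed typical round trip to this tier
    cost_per_call: float  # assumed relative cost, arbitrary units
    availability: float   # assumed fraction of time the tier is reachable


@dataclass(frozen=True)
class Step:
    name: str
    max_latency_ms: float
    min_availability: float


def place(step: Step, tiers: list[Tier]) -> Optional[Tier]:
    """Pick the cheapest tier that meets the step's latency and availability needs."""
    ok = [t for t in tiers
          if t.latency_ms <= step.max_latency_ms
          and t.availability >= step.min_availability]
    return min(ok, key=lambda t: t.cost_per_call) if ok else None


# Hypothetical numbers for illustration only.
TIERS = [
    Tier("device", latency_ms=2, cost_per_call=5.0, availability=0.9999),
    Tier("regional", latency_ms=20, cost_per_call=2.0, availability=0.999),
    Tier("cloud", latency_ms=120, cost_per_call=1.0, availability=0.99),
]
```

Under these made-up numbers, a defect check with a 10 ms deadline lands on the device, a fleet replan with a 50 ms deadline lands at the regional tier, and a retraining job with no real deadline goes to the cloud because it is cheapest. Same agent, three different homes.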

Dell's analysis of edge AI in 2026 captures the operational scale of what's shifting. Spectro Cloud's enterprise AI trends survey documents how organizations are reconsidering deployment topology entirely. ZEDEDA's recent work on edge AI reshaping industrial operations shows the pattern most clearly: as autonomous systems take on more responsibility in manufacturing, logistics, and infrastructure management, pushing decision-making to the cloud becomes operationally untenable. A manufacturing robot can't wait for network round-trip time when it's deciding whether a part is defective. A traffic management system can't route vehicles through a centralized decision engine when it needs to coordinate thousands of intersections in real time.

The hardware is already following the thesis. Lenovo's ThinkEdge SE60n Gen 2, powered by Intel Core Ultra processors delivering up to 97 TOPS with integrated AI accelerators, became available in select markets this month. Aitech is showcasing space-qualified edge computing platforms at the Space Symposium that support on-orbit data processing, essentially distributed autonomous computing in orbit. These aren't speculative research projects. These are commercial platforms built around the thesis that agentic workloads require different infrastructure than what sits in a hyperscaler campus.

If agents need distributed intelligence, then the market's reaction to that $400 billion doesn't just reflect ROI skepticism; it reflects an architectural mismatch. You could deploy the same computational capacity across thousands of smaller, distributed systems and get better performance characteristics for agentic workloads. The total cost might even be lower once you stop paying cloud access margins and network latency penalties.

This explains why the Goldman note landed. It's not that AI hyperscalers overestimated demand for chat interfaces. It's that the fundamental architecture for deploying AI is shifting, and the infrastructure decisions made in 2024 may not match the infrastructure needed in 2027.

The path forward isn't to abandon data centers entirely. Centralized infrastructure will always be valuable for training, for archival, for workloads where latency is truly irrelevant. But for the AI workloads that actually change how systems operate (autonomous decision-making, real-time coordination, distributed reasoning across thousands of sites) the cloud-first model stops being advantageous. It becomes a constraint.

Deep Engineering's analysis of agentic AI redefining edge infrastructure articulates this directly: the edge isn't the margin anymore. It's becoming the center of gravity for deployed AI systems. That reverses everything the past decade of infrastructure investment assumed.

The vendors who understood this shift earliest are already repositioning. System resilience companies are emphasizing not just local compute but failure tolerance across distributed agent networks. SiliconANGLE's coverage of Nvidia GTC documented the emerging consensus that AI infrastructure must evolve for agentic computing. The hard problems in this space aren't about squeezing more performance from chips. They're about coordinating autonomous systems that may lose network connectivity, making independent decisions without central validation, and converging on consistent outcomes when communication is intermittent.
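For a feel of what "converging when communication is intermittent" can look like, one well-known family of techniques is conflict-free replicated data types (CRDTs). None of the sources above prescribe this; it is my own choice of illustration. Below is a minimal sketch of a grow-only counter, where each site only ever increments its own slot and merging takes the per-site maximum. The class and method names are hypothetical.

```python
class GCounter:
    """Grow-only counter CRDT: each site increments only its own slot."""

    def __init__(self, site_id: str) -> None:
        self.site_id = site_id
        self.counts: dict[str, int] = {}

    def increment(self, n: int = 1) -> None:
        """Record n local events under this site's own slot."""
        self.counts[self.site_id] = self.counts.get(self.site_id, 0) + n

    def merge(self, other: "GCounter") -> None:
        """Take the per-site maximum; safe to repeat and apply in any order."""
        for site, count in other.counts.items():
            self.counts[site] = max(self.counts.get(site, 0), count)

    def value(self) -> int:
        """Total across all sites seen so far."""
        return sum(self.counts.values())
```

Two sites can count events while disconnected, swap state whenever the link comes back, and end up with the same total no matter how many times or in what order they merge. Real agent coordination is far harder than counting, but the shape of the solution is the same: design the state so that merging is always safe.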

This is a systems problem. And it requires infrastructure built with distribution as a first-class concern, not as an afterthought bolted onto cloud architectures.

The $400 billion that didn't deliver impact wasn't wasted because the models are bad. It was deployed against the wrong topology. Fixing that gap won't happen by building bigger data centers. It'll happen by building smaller ones, distributed widely, connected by the kind of programmable networks Ciena describes and coordinated through the kind of autonomous decision-making that agents demand.

The agents aren't coming to the data centers. The data centers have to go to the agents.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen about the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!