Six Million Cell Towers Walk Into a Data Center

At MWC Barcelona, NVIDIA and a 130-company coalition just announced plans to turn the global telecom network into a distributed AI compute grid. Cell towers running AI inference alongside 5G traffic. The industry is spending $700 billion building new centralized data centers while ignoring the large

There are approximately 6.4 million cell towers worldwide. Each one has power. Cooling. Backhaul connectivity. Permits that took years to obtain. Physical security. And right now, roughly 70% of their compute capacity sits idle.

This week at Mobile World Congress in Barcelona, NVIDIA announced a coalition with BT Group, Deutsche Telekom, Ericsson, Nokia, T-Mobile, SoftBank, SK Telecom, Cisco, and over 130 companies to build 6G networks on what they're calling "AI-native platforms." The core technology is AI-RAN: radio access networks where the same GPU that processes your cellular signal also runs AI inference workloads during idle cycles. T-Mobile demonstrated it running live in the field. SoftBank achieved 16-layer massive MIMO on a fully software-defined 5G stack. SynaXG hit 36 Gbps throughput with sub-10 millisecond latency running 4G, 5G, and AI workloads simultaneously on a single server.

Jensen Huang's framing was characteristically bold: "AI is redefining computing and driving the largest infrastructure buildout in human history, and telecommunications is next."

I want to focus on four words in that sentence: "the largest infrastructure buildout." Because the interesting thing about the telecom network isn't that it needs to be built. It's that it's already there.

The Infrastructure That Doesn't Need a Permit

While the AI industry spends $700 billion on new centralized data centers and communities across 11 states file moratorium bills to block construction, the telecom network represents something remarkable: a globally distributed compute infrastructure that already exists, already has community acceptance, and already has the power and connectivity to run AI workloads.

Consider what the numbers actually look like. The global telecom network includes millions of cell sites, hundreds of thousands of central offices, metro aggregation points, and edge facilities. In the United States alone, there are over 400,000 cell towers and small cells. Every single one of them already went through the permitting process. Already got environmental review. Already has a power connection and a fiber backhaul. Nobody in Denver is holding standing-room-only community meetings to protest cell towers that have been there for a decade.

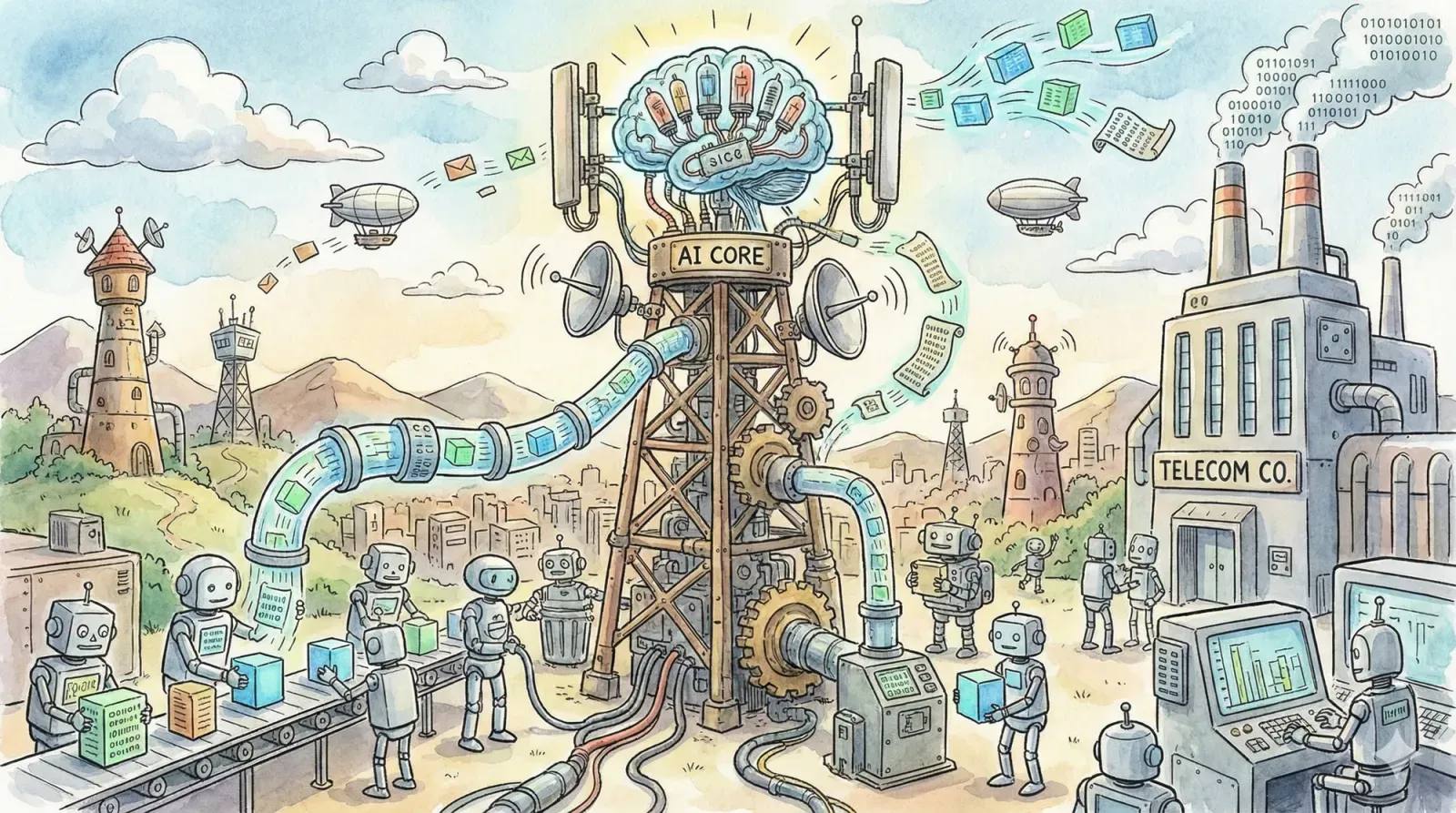

NVIDIA's AI-RAN pitch recognizes this. Their Aerial RAN Computer turns these existing sites into what they call an "AI Grid," a massively distributed network of compute nodes that can process AI inference workloads wherever they make the most sense. The base station that handles your phone call at 8 AM runs inference for a nearby hospital's diagnostic system at 2 AM. Same hardware. Same power. Same location. No new construction required.
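To make the time-sharing idea concrete, here is a minimal sketch of how much of a site's GPU could go to inference once radio traffic has taken its share. Everything in it is an assumption for illustration: the hourly load profile is invented, `RAN_HEADROOM` is a hypothetical safety margin, and none of this reflects how NVIDIA's Aerial software actually schedules work.

```python
# Hypothetical hourly RAN utilization for one cell site, as a fraction of
# GPU capacity (0.0-1.0). These values are invented, not measurements.
RAN_LOAD_BY_HOUR = [
    0.10, 0.08, 0.05, 0.05, 0.08, 0.15,  # 00:00-05:00, overnight lull
    0.35, 0.60, 0.75, 0.70, 0.65, 0.65,  # 06:00-11:00, morning ramp
    0.70, 0.65, 0.60, 0.65, 0.70, 0.80,  # 12:00-17:00, daytime
    0.85, 0.75, 0.55, 0.40, 0.25, 0.15,  # 18:00-23:00, evening peak and decline
]

# Assumed share of capacity kept free so radio traffic always has priority.
RAN_HEADROOM = 0.10


def inference_capacity(ran_load: float, headroom: float = RAN_HEADROOM) -> float:
    """Fraction of the GPU left for inference after RAN load and headroom."""
    return max(0.0, 1.0 - ran_load - headroom)


def daily_inference_gpu_hours(loads: list[float]) -> float:
    """Sum the spare capacity over each hourly slot, in GPU-hours per day."""
    return sum(inference_capacity(load) for load in loads)


if __name__ == "__main__":
    for hour in (2, 8, 18):
        spare = inference_capacity(RAN_LOAD_BY_HOUR[hour])
        print(f"{hour:02d}:00 spare capacity for inference: {spare:.0%}")
    total = daily_inference_gpu_hours(RAN_LOAD_BY_HOUR)
    print(f"Spare GPU-hours per day on this site: {total:.1f}")
```

The point of the sketch is the shape, not the numbers: radio traffic gets first claim on the hardware, and inference fills whatever is left, which is mostly the overnight hours.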

McKinsey estimates the global GPU-as-a-Service market for telcos could reach $35 to $70 billion annually by 2030. That's not hypothetical future infrastructure. That's revenue from infrastructure that operators have already depreciated.

What MWC Actually Demonstrated

The MWC announcements weren't just slides and press releases. The field results are worth paying attention to.

T-Mobile ran concurrent AI and RAN processing at their AI-RAN Innovation Center in Seattle, using Nokia's CUDA-accelerated RAN software on NVIDIA hardware. Commercial devices streamed video, ran generative AI applications, and processed AI-powered video captioning, all while the same infrastructure handled live 5G traffic on the 3.7 GHz band. SoftBank's field trial in Japan demonstrated three times the spectral efficiency and three times the throughput of conventional configurations using the same spectrum allocation. Indonesia's Indosat Ooredoo Hutchison made Southeast Asia's first AI-powered 5G call, including real-time remote control of a robotic dog over the live network.

And then there's SynaXG's benchmark: 4G, 5G sub-6GHz, 5G millimeter wave, and agentic AI workloads, all on a single NVIDIA GH200 server. Twenty component carriers activated simultaneously. 36 Gbps throughput at under 10 milliseconds latency. That's the world's first AI-RAN implementation on millimeter wave bands.

This matters because the perennial knock on edge computing has been that it's not real, that the performance can't match centralized data centers, that the workloads don't justify the complexity. These field trials are starting to close that gap with actual measurements rather than projections.

The Blind Spot

Now here's what's strange. NVIDIA is simultaneously making two arguments that contradict each other.

Argument one, articulated through AI-RAN and the 6G coalition: AI inference should be distributed. Put compute at the edge of the network. Use existing infrastructure. Process data where it's generated. Turn every cell site into an AI node. The network itself is the computer.

Argument two, articulated through their data center GPU business (which generated $62.3 billion last quarter alone): AI requires massive centralized infrastructure. Build bigger data centers. Buy more GPUs. Concentrate compute in hyperscale facilities.

For some reason, this contradiction went mostly unremarked at MWC. The same company telling telecoms to distribute AI inference is telling hyperscalers to centralize it. The same Jensen Huang who says telecommunications infrastructure is the next AI platform also says $700 billion in centralized data center capex is "just the start."

The charitable interpretation is that training stays centralized while inference distributes. There's truth to that. But inference is where the overwhelming majority of production AI compute goes, and that share is growing. If NVIDIA's own AI-RAN thesis is correct, that inference belongs at the edge, then the demand curve for centralized data center GPUs looks fundamentally different from what the $700 billion capex number assumes.

The CUDA-Shaped Catch

There's another tension in the MWC announcements that deserves scrutiny. The 6G coalition pitched itself as a commitment to "open, secure, and trustworthy platforms." The press release emphasized interoperability, supply-chain resilience, and open standards. NVIDIA even open-sourced their Aerial CUDA-accelerated RAN libraries and joined the OCUDU Foundation at the Linux Foundation.

But look at the implementation. 26 of the 33 AI-RAN Alliance demonstrations at MWC were built on NVIDIA AI Aerial. The Aerial RAN Computer runs on NVIDIA Grace CPUs and NVIDIA GPUs. The software stack is CUDA-accelerated. The networking uses NVIDIA BlueField DPUs and NVIDIA Spectrum switches. The field trials with T-Mobile, SoftBank, and IOH all run on NVIDIA platforms.

"Open" is doing a lot of work in that press release.

The telecom industry spent the last two decades learning painful lessons about vendor lock-in with Ericsson, Nokia, and Huawei. The Open RAN movement exists specifically because operators got tired of being locked into proprietary radio stacks. And now, at the exact moment the industry is converging on the next generation of network architecture, nearly 80% of the demonstrations (26 of 33) run on a single vendor's proprietary compute platform.

Ericsson and Intel announced their own 6G AI-RAN collaboration at MWC, positioning Intel Xeon processors as the compute backbone for converged RAN and edge AI. That's a start. But the ecosystem needs to be genuinely multi-vendor if "open" is going to mean anything beyond a marketing term. Distributing the geography while centralizing the vendor dependency doesn't solve the structural problem. It just moves it.

The Question That Matters

Set aside NVIDIA's business model contradictions and the vendor lock-in risk for a moment. The architecturally interesting question is simpler: if distributing AI inference across the telecom network makes sense, why doesn't distributing AI inference across every network make sense?

The logic NVIDIA uses to justify AI-RAN applies well beyond cell towers. The hospital that generates imaging data has servers. The factory with IoT sensors has compute infrastructure. The retail chain has edge devices in every store. The autonomous vehicle has onboard processing. None of these workloads benefit from a round trip to a centralized data center hundreds of miles away. All of them generate data locally, need inference locally, and could process locally if the software stack supported it.
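The placement logic behind that argument fits in a few lines. The sketch below is an assumption-laden illustration, not anyone's production scheduler: the `Site` type, its fields, and the example latencies are all hypothetical. It picks the lowest-latency site that satisfies a latency budget and has enough free compute.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Site:
    """A hypothetical place where inference could run."""
    name: str
    round_trip_ms: float   # network round trip from the data source to the site
    free_capacity: float   # fraction of compute currently available (0.0-1.0)


def place_inference(
    sites: Sequence[Site],
    latency_budget_ms: float,
    needed_capacity: float,
) -> Optional[Site]:
    """Return the lowest-latency site that fits the budget and has capacity.

    Returns None when no site qualifies, so the caller can decide whether to
    queue the request or relax the budget.
    """
    candidates = [
        site for site in sites
        if site.round_trip_ms <= latency_budget_ms
        and site.free_capacity >= needed_capacity
    ]
    return min(candidates, key=lambda site: site.round_trip_ms, default=None)


if __name__ == "__main__":
    # Invented example values for illustration only.
    sites = [
        Site("central-dc", round_trip_ms=45.0, free_capacity=0.9),
        Site("metro-edge", round_trip_ms=12.0, free_capacity=0.6),
        Site("cell-site", round_trip_ms=4.0, free_capacity=0.3),
    ]
    choice = place_inference(sites, latency_budget_ms=20.0, needed_capacity=0.2)
    print(choice.name if choice else "no site meets the budget")
```

Nothing in the function knows what a cell tower is. Swap in a hospital server, a factory gateway, or a store's edge box and the same rule applies, which is the generalization being argued here.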

Gartner predicts that by 2027, organizations will use task-specific small language models three times more often than general-purpose LLMs. By 2028, 30% of generative AI workloads are projected to run on-premises or on-device. The AI-RAN field trials showed that meaningful inference can happen on a single server sharing resources with an entirely different primary workload. If a cell tower can run AI while simultaneously processing 5G traffic, a hospital server can certainly run AI while processing patient records.

The cell tower network is compelling because it's the largest, most distributed, most connected compute infrastructure humans have ever built. But it's not unique. The principle generalizes. Compute belongs where the data is, where the users are, where the latency requirements demand it. The cell tower just happens to be the most obvious proof point.

What MWC 2026 Actually Proved

The most interesting thing about Mobile World Congress this year isn't NVIDIA's 6G coalition or the AI-RAN field trials or the 130-company pledge. It's that the largest GPU company on Earth stood on stage and made the case for distributed inference, and the rest of the industry didn't notice.

Sixty-two billion dollars of NVIDIA's quarterly revenue comes from selling GPUs to centralized data centers. Their telecom division is a fraction of that. But the telecom team just demonstrated that AI workloads can run on existing distributed infrastructure, at carrier-grade reliability, sharing compute resources with other workloads, at the edge of the network, without building anything new.

If that's true for telecoms, it's true for everything.

The $700 billion question isn't whether AI needs compute. It does. The question is whether that compute needs to be concentrated in new centralized facilities that communities are already refusing to permit, or whether it can be distributed across the infrastructure that already exists. NVIDIA's own engineers at MWC just showed you the answer. Whether NVIDIA's business model catches up to NVIDIA's engineering is a different question entirely.

Six million cell towers walked into a data center, and the punchline is: they didn't need to.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book, based on what I have seen, about the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!