NVIDIA Just Showed You the $249 Future. Nobody Built the Layer That Makes It Work.

Well... not NOBODY :)

On Monday, Jensen Huang walked onstage at GTC 2026 and delivered what might be the most consequential keynote in NVIDIA's history. $1 trillion in purchase orders for Blackwell and Vera Rubin through 2027. A walking, talking Olaf robot trained in Omniverse and powered by Jetson. IGX Thor now generally available for factories and hospitals, already deployed by Caterpillar, Medtronic, Hitachi Rail, and KION. Autonomous vehicles with BYD, Hyundai, Nissan, and Geely. An entirely new class of agentic infrastructure with NemoClaw layering security on top of OpenClaw. A new metric for AI ROI: tokens per watt.

Jensen called it the "inflection of inference." AI is no longer a thing that chats. It reasons, it acts, and it's moving into the physical world at a pace that should make every infrastructure engineer get lots of CRAZY IDEAS.

Almost all of the commentary since has focused on the chips, the robots, and the trillion-dollar demand signal. The part that actually determines whether any of this works in production got about thirty seconds of stage time.

The Hardware Is Remarkably Cheap Now

NVIDIA's bet is clear, and the numbers back it up. The Jetson Orin Nano Super is $249, delivers 67 TOPS of AI performance (a 1.7x improvement over its predecessor at half the price), and runs LLMs, vision transformers, and visual language models out of the box. IGX Thor brings Blackwell-architecture compute to the industrial and medical edge with functional safety baked in. Johnson & Johnson is building surgical robotics on it. Caterpillar is running an AI cabin assistant on a machine that weighs eight tons and fits inside a shipping container.

The hardware is there. The models are there. NemoClaw gives you an agentic framework that runs on everything from Jetson to DGX. JetPack handles the OS and drivers. You can buy a device for the price of a decent pair of running shoes, plug it in, and you've got a full AI computer.

So why does it still take weeks to get a real workload running on one?

The Part That Jensen Didn't Talk About

You've got your $249 Jetson sitting in a factory, or a warehouse, or mounted on a pole above a parking lot. You've got a camera feeding it frames. You've got a model ready to run inference. Great. Now what?

That camera is shooting 4K video. Your model doesn't need 4K. It needs downsampled, cleaned, color-corrected frames at a specific resolution that maximizes inference speed without losing the signal you care about. A single 4K camera generates roughly 8 to 15 Mbps of continuous data depending on compression and frame rate, and that's per camera. Spread a hundred cameras across twenty sites, and at those bitrates you're looking at roughly 9 to 16 terabytes per day that need processing before you've extracted a single useful insight.
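If you want to check that math yourself, here is a quick back-of-the-envelope sketch. It assumes a hundred cameras in total (not a hundred per site), decimal units (1 TB = 1,000,000 MB), and streams that run around the clock. The function name `daily_terabytes` is just something I made up for illustration.

```python
# Back-of-the-envelope data volume for compressed 4K camera streams.
SECONDS_PER_DAY = 24 * 60 * 60


def daily_terabytes(mbps_per_camera: float, cameras: int) -> float:
    """Convert a per-camera bitrate (megabits/s) into fleet-wide TB per day."""
    megabits_per_day = mbps_per_camera * SECONDS_PER_DAY * cameras
    megabytes_per_day = megabits_per_day / 8  # 8 bits per byte
    return megabytes_per_day / 1_000_000      # MB -> TB (decimal units)


CAMERAS = 100  # assumption: one hundred cameras in total across the twenty sites

low = daily_terabytes(8, CAMERAS)
high = daily_terabytes(15, CAMERAS)
print(f"{low:.1f} to {high:.1f} TB per day")
```

Change `CAMERAS` to 2000 (a hundred per site) and the same arithmetic lands in the hundreds of terabytes per day.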

Who handles the data shaping before it hits the model? When you need to swap out the model for a better one, how does that update roll out across 500 sites? When inference results need to land in your Snowflake instance or your Databricks lakehouse, how does that happen? When something breaks at site 247, how do you even know?

These are not hypothetical questions. These are the questions that turn a $249 dev kit into a six-month integration project.

Destinations Aren't Starting Points

I keep hearing the same objection: "Doesn't Snowflake do this? Doesn't Databricks handle data pipelines?" And the answer is yes, absolutely, those platforms are critical. They're where your data needs to end up. But they assume your data has already arrived, clean and structured, ready for analysis.

That assumption ignores basic physics. Data at the edge is raw, noisy, high-volume, and expensive to move. The processing needs to happen before the data leaves the device. Filter it, transform it, run inference locally, send only what matters. That's not what Snowflake does, and Databricks doesn't pretend otherwise. Those are destinations. What's missing is the pipeline that runs at the source, on the device itself.

Jensen told The Decoder this week that NVIDIA wants to "swap robotics' data problem for a compute problem." That framing is revealing. You can't swap the data problem out. You have to solve it. You can throw all the compute in the world at a sensor feed, but if the data arriving at the model is noisy, oversized, or shaped wrong, the inference is garbage. Compute doesn't fix data preparation; it accelerates whatever the data pipeline gives it, good or bad.

One Frame Every Six Milliseconds

We built a proof of concept that makes this concrete. Two off-the-shelf cameras from Costco (seriously, Costco) connected to a single Jetson device. The job: count cardboard boxes on a conveyor belt.

The pipeline takes the raw camera feed, downsamples it from 4K, reduces the color channels, and reshapes the frames for a fine-tuned YOLO model that only knows about cardboard boxes. Because the model is purpose-built and the data is properly shaped before inference, it processes one frame every six milliseconds. That's not a typo. Six milliseconds.
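To give a feel for what "shaping" means here, below is a minimal NumPy sketch of the three steps: downsample, reduce color channels, reshape. It is not our production pipeline. The 640x640 target size, the single grayscale channel, the nearest-neighbor sampling, and the name `shape_frame` are all assumptions for illustration, and a stock YOLO model would normally expect three channels unless it was fine-tuned otherwise.

```python
import numpy as np


def shape_frame(frame: np.ndarray, size: int = 640) -> np.ndarray:
    """Turn one HxWx3 uint8 frame into a (1, 1, size, size) float32 tensor."""
    height, width, _ = frame.shape
    # Downsample by nearest-neighbor index sampling. This ignores aspect
    # ratio; a real pipeline would more likely use an area or bilinear resize.
    rows = np.arange(size) * height // size
    cols = np.arange(size) * width // size
    small = frame[rows[:, None], cols[None, :]]  # shape: (size, size, 3)
    # Reduce three color channels to one luminance channel (BT.601 weights).
    weights = np.array([0.299, 0.587, 0.114], dtype=np.float32)
    gray = small.astype(np.float32) @ weights    # shape: (size, size)
    # Scale pixel values to the 0-1 range and add batch and channel axes.
    return (gray / 255.0)[None, None, :, :]


# A random array stands in for one 4K (3840x2160) RGB camera frame.
frame_4k = np.random.default_rng(0).integers(0, 256, size=(2160, 3840, 3), dtype=np.uint8)
tensor = shape_frame(frame_4k)
print(tensor.shape, tensor.dtype)
```

The point of the sketch is the size difference: the raw frame is about 24.9 million bytes, while the shaped tensor holds 409,600 values. The model only ever sees the small one.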

The results stream directly into the customer's backend. The entire thing runs on 50 MB of RAM and less than 1% CPU on the edge device. The pipeline didn't just make inference possible. It made inference fast, cheap, and operationally manageable.

None of the magic is in the hardware. None of it is in the model. The magic is in the data pipeline that connects them.

A Trillion Devices, a Trillion Pipelines

Jensen's vision is explicit: a trillion devices running AI in the physical world. Robots in warehouses. Cameras on factory floors. Sensors in hospitals. Jetson modules in autonomous vehicles. IGX Thor systems running real-time surgical assistance for J&J and Karl Storz. The world's four largest industrial robot manufacturers (FANUC, ABB, YASKAWA, KUKA) are integrating NVIDIA platforms into their commissioning workflows.

Every single one of those devices needs a way to ingest data, shape it for the specific model it's running, execute inference, route the output to the right destination, and do all of that while someone can observe, update, and manage it remotely. That's a data pipeline. And it needs to be deployed in minutes, not weeks, because the economics of edge AI collapse if every deployment is a bespoke engineering project.
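Stripped down to a toy, the stages in that list (ingest, shape, infer, route) might look something like the sketch below. Everything in it is a hypothetical stand-in: the frames are short lists of numbers, "inference" is a threshold count, and a Python list plays the role of the destination. What it does show is the filter-at-the-source idea, where a result leaves the device only when the count changes.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, List


@dataclass
class Result:
    site: str
    frame_id: int
    box_count: int


def run_pipeline(
    frames: Iterable[List[int]],
    shape: Callable[[List[int]], List[int]],
    infer: Callable[[List[int]], int],
    site: str,
) -> Iterator[Result]:
    """Ingest frames, shape them, run inference, and yield only changed counts."""
    last_count = None
    for frame_id, frame in enumerate(frames):
        count = infer(shape(frame))
        if count != last_count:  # filter at the source: skip unchanged results
            yield Result(site, frame_id, count)
            last_count = count


# Hypothetical stand-ins so the sketch runs without a camera or a model.
def keep_every_other(frame: List[int]) -> List[int]:
    return frame[::2]  # "shaping": drop half the samples


def count_bright(frame: List[int]) -> int:
    return sum(value > 5 for value in frame)  # "inference": count bright samples


fake_frames = [[0, 0, 9, 9], [0, 0, 9, 9], [9, 9, 9, 9], [0, 0, 0, 0]]

# The list stands in for the route to a backend or warehouse.
outbox = list(run_pipeline(fake_frames, keep_every_other, count_bright, site="site-247"))
for result in outbox:
    print(result)
```

Four frames go in and three results come out, because the second frame produced the same count as the first. The hard parts named above (remote updates, observability, governance) are exactly what this toy leaves out.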

Caterpillar isn't going to run AI cabin assistants without a way to manage what data feeds those models. Medtronic isn't going to deploy surgical AI without governance, PII masking, and audit trails on the data flowing through those systems. T-Mobile and Nokia are already working with NVIDIA to transform 5G networks into distributed edge AI infrastructure. That's infrastructure that needs orchestration, not just compute.

The Unsexy Layer

Data pipelines are like oxygen: nobody walks out of a keynote excited about them, but nothing works without them. Jensen showed you the chip, the model, the robot, the car, and the trillion-dollar market. Everything except the connective tissue that makes it all function at scale.

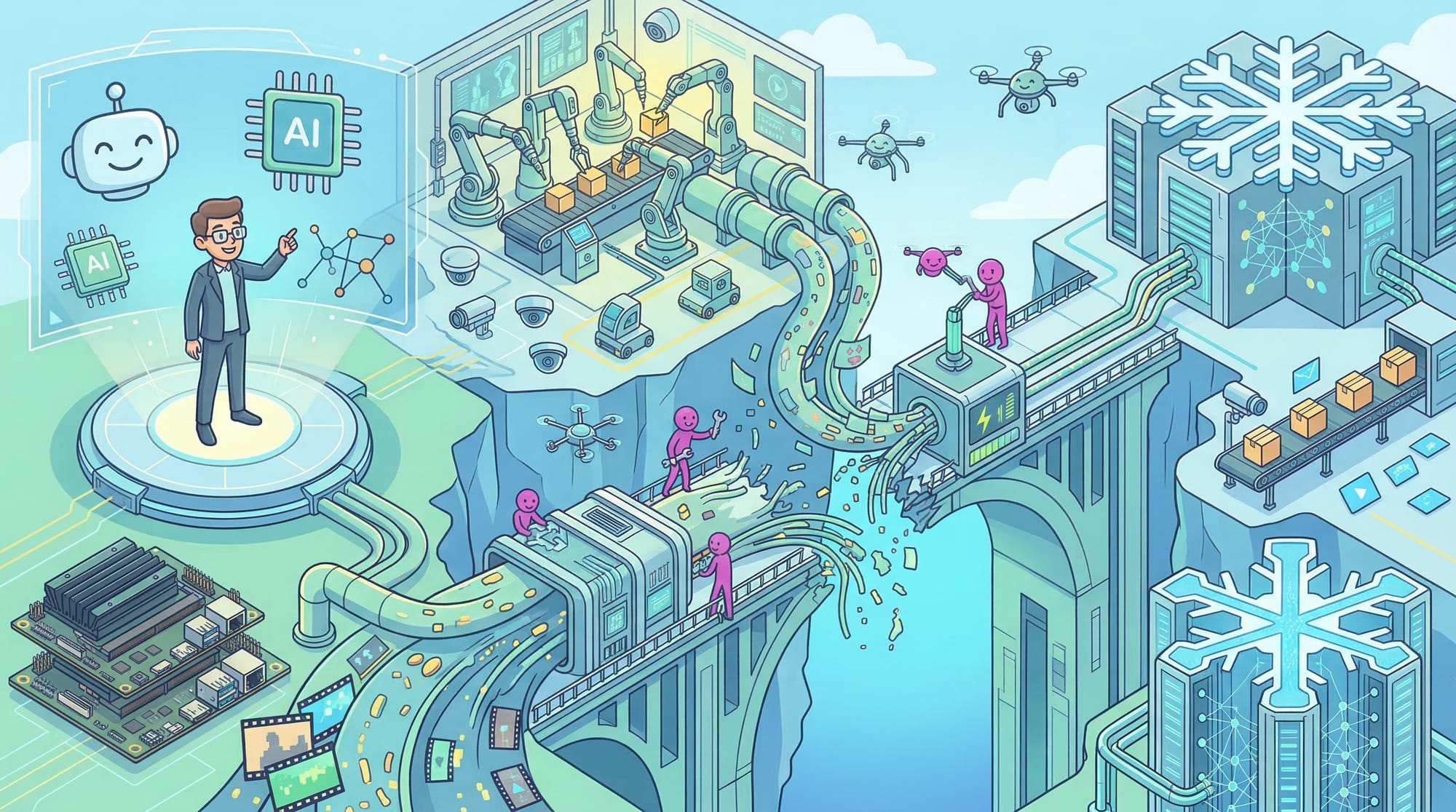

The trillion-device future he described requires a trillion-pipeline infrastructure underneath it, and right now, almost nobody is building that. The gap isn't hardware. The gap isn't models. The gap is the 200 feet of data transformation between a camera and a GPU.

Jensen showed you the future. The question nobody's asking is who builds the plumbing.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen of the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!