The Great Refusal

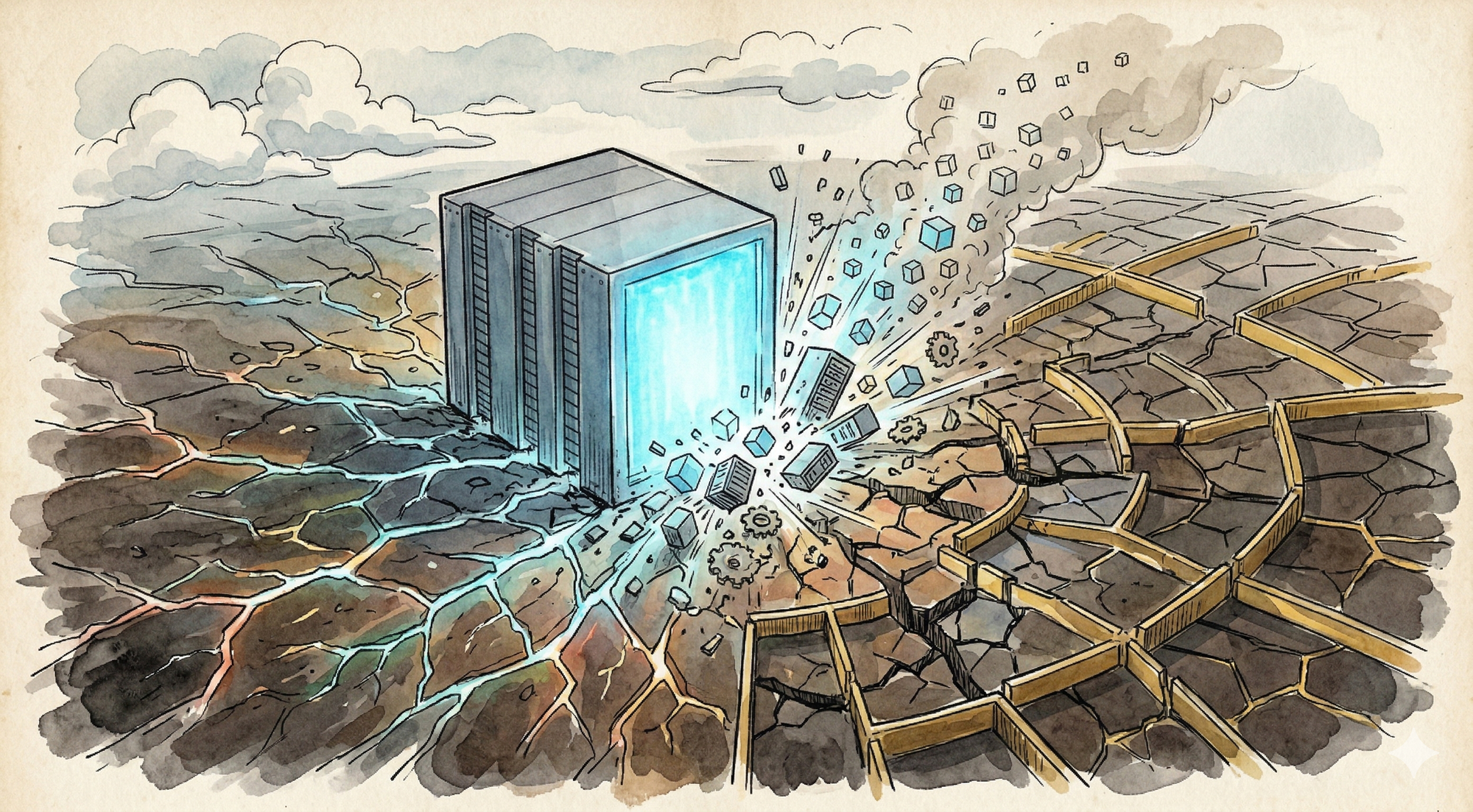

In six weeks, 300+ data center bills have been filed across 30+ states. Six states have moratorium proposals. Local governments in 14 states have already hit pause. The industry frames this as a PR problem. It is an architecture problem.

In the first six weeks of 2026, state legislators across the country filed more than 300 bills related to data center regulation. Six states now have active moratorium proposals on the books: New York, Virginia, Oklahoma, Georgia, Maryland, and Vermont. Local governments in at least 14 states have already enacted their own moratoria. At least 18 states have introduced bills creating special rate classes for large energy consumers, and more than 230 environmental organizations have signed an open letter to Congress calling for a full national moratorium.

This is happening while hyperscalers commit nearly $700 billion in capex for 2026, almost double last year's spending. While Nvidia posts record $68 billion quarterly revenue and Jensen Huang tells Wall Street that AI infrastructure spending is "just the start." While Meta signs a 6-gigawatt GPU deal with AMD.

The industry sees two separate stories: unstoppable AI demand on one side, annoying political opposition on the other. PR teams are drafting community engagement plans. Lobbyists are working the state capitols. Microsoft is offering to cover full power costs and reject tax breaks as a goodwill gesture.

They're all missing the point. The Great Refusal isn't a political problem that needs better messaging. It's the democratic system telling the industry that the architecture itself is broken.

Communities Can Do Arithmetic

The conventional framing treats data center opposition as emotional, uninformed, or anti-technology. That framing is wrong, and the numbers prove it.

New York's residential electricity rates increased 43% between 2020 and 2025. The state's power grid interconnection queue jumped from 6,800 megawatts to 12,000 megawatts in four months. The New York Independent System Operator projects the state could face a 1,600-megawatt power shortage by 2030, largely due to data center demand.

Virginia, home to more data centers than any other state with roughly 643 facilities, gave up $1.6 billion in sales and use tax revenue last year, a 118% increase from the prior year. The Virginia Senate just proposed ending the exemption entirely and redirecting the revenue to tax rebates for residents. Georgia is expected to lose at least $2.5 billion to data center sales tax exemptions this year, 664% higher than the state's previous estimate. Dominion Energy's contracted capacity went from 21.4 gigawatts in July 2024 to 47.1 gigawatts by Q3 2025. More than doubled in 15 months.
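The arithmetic here is easy to reproduce. Below is a minimal sketch in Python that uses only the figures quoted above. The helper names are illustrative, and the "implied" baselines assume each percentage is an increase over the earlier value, which the sources may define differently.

```python
def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new - old) / old * 100.0


def implied_baseline(new: float, pct: float) -> float:
    """Earlier value implied by `new` being `pct` percent higher than it."""
    return new / (1.0 + pct / 100.0)


# Figures as quoted in the text; units noted per line.
dominion_growth = pct_increase(21.4, 47.1)       # GW contracted, Jul 2024 -> Q3 2025
ny_queue_growth = pct_increase(6_800, 12_000)    # MW in New York's interconnection queue
va_prior_year = implied_baseline(1.6, 118)       # $B, assumes 118% is year-over-year growth
ga_prior_estimate = implied_baseline(2.5, 664)   # $B, assumes 664% is relative to the old estimate

print(f"Dominion contracted capacity: +{dominion_growth:.1f}%")
print(f"New York interconnection queue: +{ny_queue_growth:.1f}%")
print(f"Virginia implied prior-year exemption: ${va_prior_year:.2f}B")
print(f"Georgia implied previous estimate: ${ga_prior_estimate:.2f}B")
```

Under those assumptions, Dominion's contracted capacity grew by about 120%, and New York's queue grew by roughly three quarters in four months.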

These aren't emotional reactions. They are responses to measurable costs: rising electricity bills, strained grids, water consumption, noise, lost tax revenue, converted agricultural land. When Oklahoma Republican Senator Kendal Sacchieri says "I just don't understand my Republican Party on this one" while filing a moratorium bill, or when Oklahoma's Republican House Speaker Kyle Hilbert says "those days are over" for data centers cutting secret deals, they're reflecting a calculation, not an ideology.

A data center that consumes as much energy daily as a mid-sized city while employing a few dozen people and driving up everyone else's electricity bills is a bad deal for the host community. Communities are performing the cost-benefit analysis that the industry refuses to do publicly, and they're reaching the obvious conclusion: the costs of centralized AI infrastructure are concentrated and local, while the benefits are diffuse and largely extracted. That's not a messaging problem you can fix with better PR. That's a structural consequence of the architecture.

The Bipartisan Signal

One of the most telling features of The Great Refusal is its bipartisanship. Democrats sponsored moratorium bills in New York, Virginia, Georgia, and Vermont. Republicans sponsored bills in Maryland and Oklahoma. Bernie Sanders wants a national moratorium. Ron DeSantis is fighting data centers in Florida. Even Trump, who launched his presidency with executive orders to accelerate data center construction, recently posted that tech companies building data centers must "pay their own way."

In a political environment where virtually nothing gets bipartisan agreement, data center resistance is attracting support from progressive environmentalists and conservative populists simultaneously. That convergence matters because it means the opposition isn't coming from a single ideological camp that might lose the next election cycle. It's rooted in something more basic: the physical reality of what happens when you concentrate enormous energy demand in specific geographic locations.

When Michigan Democrat Erin Byrnes asks "who is actually benefiting from these massive data centers?" and Oklahoma Republican Kendal Sacchieri says "it's the same argument as green energy," they're arriving at the same conclusion from completely different starting points. The centralized model imposes concentrated costs on communities while extracting the value elsewhere.

That's not a left-right issue. That's a topology issue.

The Architecture Problem

The tech sector treats data center opposition as an externality to be managed. Better community relations. Tax concessions. Power purchase agreements. Microsoft promising to cover grid costs. Google acquiring energy companies. The Silicon Valley approach to building a "shadow grid" of private electrical supply. All of these are strategies for maintaining centralization in the face of its consequences.

But what if communities aren't wrong? What if the concentrated costs they're experiencing aren't incidental to the architecture but inherent to it?

Centralized AI infrastructure concentrates power demand because centralized computing concentrates computing. That's a tautology, but it's one the industry keeps trying to engineer around rather than rethink. A hyperscale data center consuming hundreds of megawatts in a single location isn't a design flaw that better community engagement can mitigate. It's the logical outcome of an architecture that assumes all AI workloads must happen in a handful of massive facilities.

Distribute the compute and you distribute the load. No single community bears the grid strain. No single power grid hits crisis thresholds. No single water supply gets drained for cooling. The costs spread with the infrastructure instead of concentrating at geographic chokepoints.

And the technology to do this already exists. Gartner predicts that by 2027, organizations will use task-specific small language models three times more often than general-purpose LLMs. By 2028, 30% of generative AI workloads will shift to domain-specific models running on-premises or on-device, up from less than 1% in 2024. The per-token cost gap between centralized API calls and local inference is shifting in local inference's favor by orders of magnitude. Even Dell's CTO is acknowledging that running models locally will become the norm.

The 300+ bills filed in six weeks aren't just a political phenomenon. They're the democratic system doing what markets haven't: recognizing that the architecture itself needs to change.

What 300 Bills Actually Tell You

If you're a systems thinker, the legislative pattern is remarkably informative.

The bills aren't identical, and that's what makes them interesting. New York's S.9144 targets facilities over 20 megawatts with a three-year moratorium. Oklahoma's SB 1488 targets facilities over 100 megawatts with a moratorium until 2029. Virginia's proposal pauses permits until all pending interconnection requests are fulfilled, which is functionally an indefinite moratorium given how quickly those requests are piling up. Maryland's moratorium lifts once legislation requiring colocation with power generation is enacted.
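The size thresholds in these bills make the concentration argument concrete. The toy calculation below asks how many equal-sized sites a workload would need so that no single site exceeds a given threshold. The 300-megawatt total is a hypothetical chosen for illustration, not a figure from any bill or facility. The sketch also ignores whether a given bill would aggregate related sites, which is an open assumption.

```python
import math

# Thresholds in megawatts, as described in the text above.
THRESHOLDS_MW = {
    "New York S.9144": 20,
    "Oklahoma SB 1488": 100,
}

# Hypothetical total demand, for illustration only.
HYPOTHETICAL_TOTAL_MW = 300


def min_sites(total_mw: float, threshold_mw: float) -> int:
    """Smallest number of equal sites such that no site exceeds threshold_mw."""
    return math.ceil(total_mw / threshold_mw)


for bill, threshold in THRESHOLDS_MW.items():
    n = min_sites(HYPOTHETICAL_TOTAL_MW, threshold)
    per_site = HYPOTHETICAL_TOTAL_MW / n
    print(f"{bill}: {n} sites at {per_site:.1f} MW each")
```

The total demand is the same in every case. Only the largest load any single grid or community has to absorb changes.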

The diversity of approaches reveals a common diagnosis applied through different local constraints. Every bill responds to the same underlying problem, whether it focuses on energy, water, tax incentives, or environmental impact: the concentrated footprint of centralized infrastructure. The specifics differ because local conditions differ, but the pattern is consistent.

Virginia's $1.6 billion annual data center tax break is on the chopping block. Georgia's legislature is looking at repealing data center tax exemptions entirely. The momentum has shifted from "how do we attract data centers" to "how do we protect our communities from data centers."

That's a phase transition, and in complex systems, phase transitions don't reverse easily.

The $700 Billion Collision

The timing makes this particularly consequential. This isn't happening during a lull in AI investment. It's happening at the exact moment the industry is committing to its most aggressive buildout ever.

Yesterday, Nvidia posted $68.1 billion in quarterly revenue, up 73% year over year. Jensen Huang told analysts that AI infrastructure spending is just beginning. The five largest hyperscalers plan to spend between $660 billion and $700 billion on capex this year, nearly double 2025. AI capex now accounts for nearly a fifth of US GDP growth, according to Pantheon Macroeconomics.

Every dollar of that $700 billion assumes the compute happens in centralized facilities. Every data center in the pipeline assumes communities will accept the concentrated costs. Every capex forecast assumes the political environment won't materially constrain the buildout.

Those assumptions are breaking down in real time. The industry is pouring money into an architecture that the democratic process is starting to reject, not because communities are anti-technology, but because communities can do arithmetic. A Meta facility under construction in Louisiana will consume as much energy daily as New Orleans while employing a few dozen people and requiring at least three new fossil-fueled power plants to operate. No amount of tax concessions changes that math, because the architecture makes the math bad. Concentrating compute concentrates costs.

The Path Nobody's Taking

The frustrating thing about The Great Refusal is that both sides are correct within their own framing, and both are missing the synthesis.

Communities are right that centralized data centers impose unacceptable concentrated costs. The industry is right that AI workloads require massive infrastructure investment. What neither side is saying is that AI doesn't require centralized infrastructure. The demand is real. The architecture is a choice.

75% of enterprise data is already generated outside traditional data centers, in factories, hospitals, retail locations, vehicles. Edge inference eliminates round-trip latency, egress costs, and jurisdictional data-transfer complexities. Small language models running locally deliver comparable performance at a fraction of the cost for most enterprise use cases, and federated approaches to training avoid the need to move massive datasets to central locations at all.

A distributed AI infrastructure would spread both the compute and the footprint across the landscape instead of concentrating it. The power demand doesn't disappear, but it distributes. No single grid bears the full load. No single community absorbs the externalities. The economics of AI still work. The physics of community impact change fundamentally.

The moratoria buy time, which is valuable. But without an architectural alternative, the pause just delays the same fight. Communities will eventually need AI infrastructure. The question is whether that infrastructure looks like a handful of gigawatt-scale facilities or a widely distributed network of smaller, local compute resources integrated into existing infrastructure.

The Signal

Three hundred bills in six weeks. Six states with moratorium proposals. Fourteen states with local construction pauses. Bipartisan support from Bernie Sanders to Ron DeSantis. Interconnection queues ballooning while legislators scramble to hit the brakes.

The industry hears noise. I hear signal.

For twenty years, the tech industry operated on the assumption that centralization was the natural order. Compute was expensive, so you concentrated it. Data was "free" to move, so you shipped it to the compute. The economics rewarded scale, and scale meant bigger and more centralized.

Those assumptions are inverting. Compute is getting cheaper and more distributable. Data movement is getting more expensive: economically, legally, and now politically. The external costs of centralization are being priced in through legislative action rather than market mechanisms, which is what happens when markets fail to price in externalities on their own.

The companies that figure this out will build for a world where AI infrastructure is distributed by design, not centralized by default. The companies that don't will keep spending billions on assets that communities increasingly won't permit and regulations increasingly restrict.

Three hundred bills is a lot of signal. The question is whether anyone in the industry is listening.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen about the real-world challenges of data preparation for machine learning, focusing on operational, compliance, and cost. I'd love to hear your thoughts!