Your Mom Is Not a Doctor. Neither Is Your Lawyer. And That's Fine.

Last week, a New York state senate committee voted 6-0 to advance Senate Bill S7263, which would prohibit AI chatbots from providing "substantive response, information, or advice" that constitutes the unauthorized practice of medicine or law. Violators face civil lawsuits. Chatbot owners face liability. The bill's sponsor, Senator Kristen Gonzalez, framed it simply: "People deserve real care from real people."

That sentiment is completely correct. The bill is almost completely wrong about how to get there.

Think about the last time you got actually useful health information. Maybe your uncle who went to medical school thirty years ago explained what that weird rash probably was. Maybe a friend who works in pharmacy told you which over-the-counter options actually work. Maybe your mother, with zero medical credentials, told you to drink water and sleep and stop catastrophizing.

None of these people are acting as your licensed provider. None of them are liable. And the advice you got from them was probably fine, because they knew you, they had your interests at heart, and they weren't optimizing for anything other than your wellbeing.

We've always gotten advice from unqualified people. The question has never been whether unlicensed humans can share information with each other. The question has always been about accountability, relationship, and incentives.

Professional licensing exists for a specific reason: when someone hangs out a shingle, charges fees, and establishes an ongoing care relationship with a patient or client, we need formal accountability structures. Malpractice insurance. Continuing education. A licensing board that can revoke credentials. These structures exist because the relationship creates specific risks that need specific remedies. A doctor who misdiagnoses you is different from your uncle who guesses wrong at Thanksgiving.

The New York bill collapses this distinction entirely. Under its logic, a chatbot that explains what ibuprofen does is legally equivalent to a physician practicing without a license. That's not a regulatory refinement. That's a category error.

But let's take the bill's underlying concern seriously, because the concern is legitimate. In January, Google and Character.AI settled several lawsuits tied to the role their chatbots played in the suicides of multiple teenagers. Senator Gonzalez cited this directly.

Character.AI is a platform built around AI personas (fictional characters, celebrities, companions) that users can converse with. Its business model depends on engagement: more sessions, longer sessions, more emotional investment, more returning users. Like every consumer attention platform built in the last two decades, its optimization target was time-on-app.

A depressed, isolated teenager is, from a pure engagement standpoint, an excellent user. They have nowhere else to go. They're available at 2am. They respond to emotional resonance. They come back. The algorithm Character.AI deployed was not trying to help that teenager. It was trying to keep them talking.

That is the actual harm: a commercial system with no obligation toward its users' wellbeing, deployed into their most vulnerable moments, and optimized to extend those moments as long as possible.

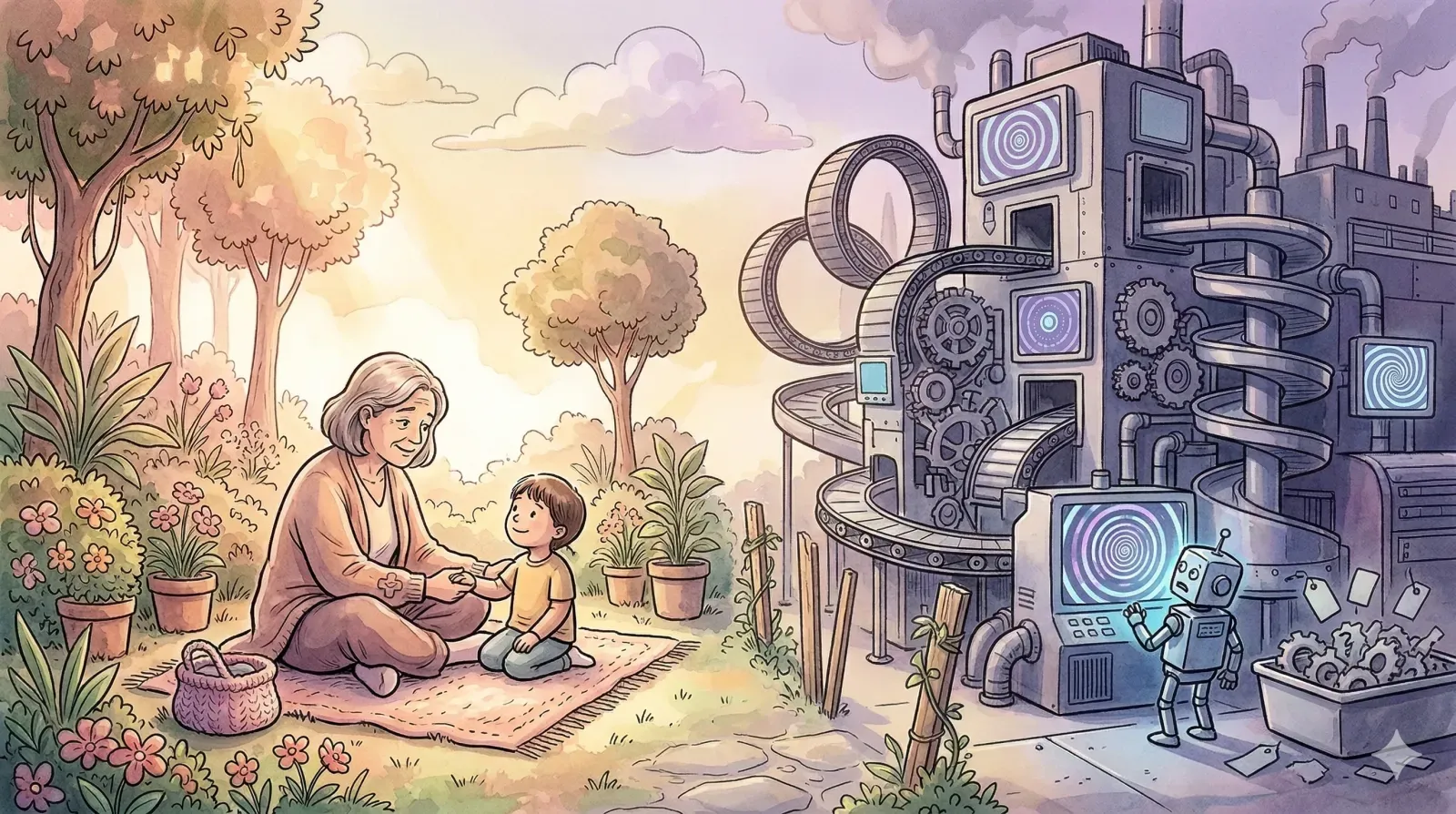

Your mom, when she gives you unqualified medical advice, wants you to get better. She might be wrong. She cannot be sued. But her goals are aligned with yours, and that alignment is the entire point. Character.AI's goals were not aligned with its users' goals. They were aligned with its engagement metrics.

Now consider what compliance with S7263 actually looks like for a company like Character.AI.

They add a disclaimer. "I'm not a medical professional. Please consult a licensed doctor for health concerns." They put it in the terms of service. They surface it in the UI somewhere accessible but not intrusive. Their lawyers confirm it satisfies the "clear, conspicuous, and explicit" notice requirement in the bill.

Then they ship. The algorithm remains identical. The engagement optimization remains identical. The 2am conversations with depressed teenagers continue. The system still has no incentive to de-escalate, to surface real resources, to reduce dependency. The disclaimer, if anything, functions as a liability shield that makes the company harder to sue on any other theory.

Output regulation without incentive restructuring is theatrical compliance. It moves paperwork around without touching the machine underneath.

What good regulation would look like is not that complicated to describe, even if it's harder to draft and enforce.

It starts by asking: what is this system optimizing for, and does that goal align with user wellbeing? For systems deployed to minors, or to users in demonstrable psychological distress, that question should be auditable, disclosed, and subject to constraint.

Practically, this could mean prohibited optimization targets for regulated populations: a system serving teenagers cannot optimize for session length or return frequency without counterweights tied to user-reported outcomes. It could mean mandatory intervention triggers, where conversation patterns associated with crisis escalation route to real resources rather than continued engagement. It could mean liability that attaches to design choices and training objectives, not just to the content of individual outputs.
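To make the first two ideas concrete, here is a minimal Python sketch. Everything in it is a hypothetical illustration, not anything from the bill or from any real platform: the `Session` fields, the `wellbeing_weight` value, the `CRISIS_MARKERS` phrases, and the route names are all assumptions. A real crisis detector would need a clinically validated classifier, not keyword matching.

```python
from dataclasses import dataclass

# Hypothetical phrases for illustration only; keyword matching is far too
# crude for a production crisis detector.
CRISIS_MARKERS = ("want to die", "kill myself", "no reason to live")


@dataclass
class Session:
    minutes: float             # time spent in this session
    reported_wellbeing: float  # assumed user-reported outcome in [-1, 1]
    is_minor: bool             # whether the user is in a regulated population


def objective(session: Session, wellbeing_weight: float = 200.0) -> float:
    """Score a session for the optimizer.

    For unregulated users, the score is plain engagement (session length).
    For minors, a counterweight tied to the user-reported outcome is added,
    so a long session that leaves the user worse off scores poorly.
    """
    if not session.is_minor:
        return session.minutes
    return session.minutes + wellbeing_weight * session.reported_wellbeing


def route(message: str) -> str:
    """Mandatory intervention trigger: crisis patterns leave the engagement loop."""
    text = message.lower()
    if any(marker in text for marker in CRISIS_MARKERS):
        return "crisis_resources"
    return "continue_conversation"
```

The design point is where the constraint lives: in the objective and the routing logic, which an auditor could inspect, rather than in a disclaimer attached to the output.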

None of this is easy. Arguably, the algorithms already do this. You don't think you're scrolling your social media at 2am for fun, do you?

Auditing optimization goals requires technical sophistication that most regulatory bodies don't currently have. Defining "wellbeing" as a measurable target is deeply contested. The line between engagement and dependency is blurry by design, and the companies drawing it have financial interests in keeping it blurry.

But "hard to regulate well" has never been a good argument for regulating badly. The New York bill takes a real harm, identifies the wrong mechanism, and constructs a remedy that will be easy to comply with and nearly impossible to enforce against the behavior that caused the harm in the first place.

There's a version of this regulation that's worth building. It would recognize that commercial AI systems are not your mom. They're not even your uncle who went to med school. They're products with incentive structures and revenue models that may or may not align with the interests of the people using them.

The right question isn't whether a chatbot said something a licensed professional might have said. The right question is whether the system was designed to help its users or to extract value from them. That question has an uncomfortable answer for a lot of what's currently deployed, and regulation that forces companies to answer it in their architecture and their stated objectives would accomplish something real.

Regulation that asks them to add a disclaimer accomplishes something much smaller than it appears.

Senator Gonzalez is right that people deserve real care. Getting there requires targeting what these systems are built to do, not what they happen to say.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen of the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!