The Missing Part of the Pipeline

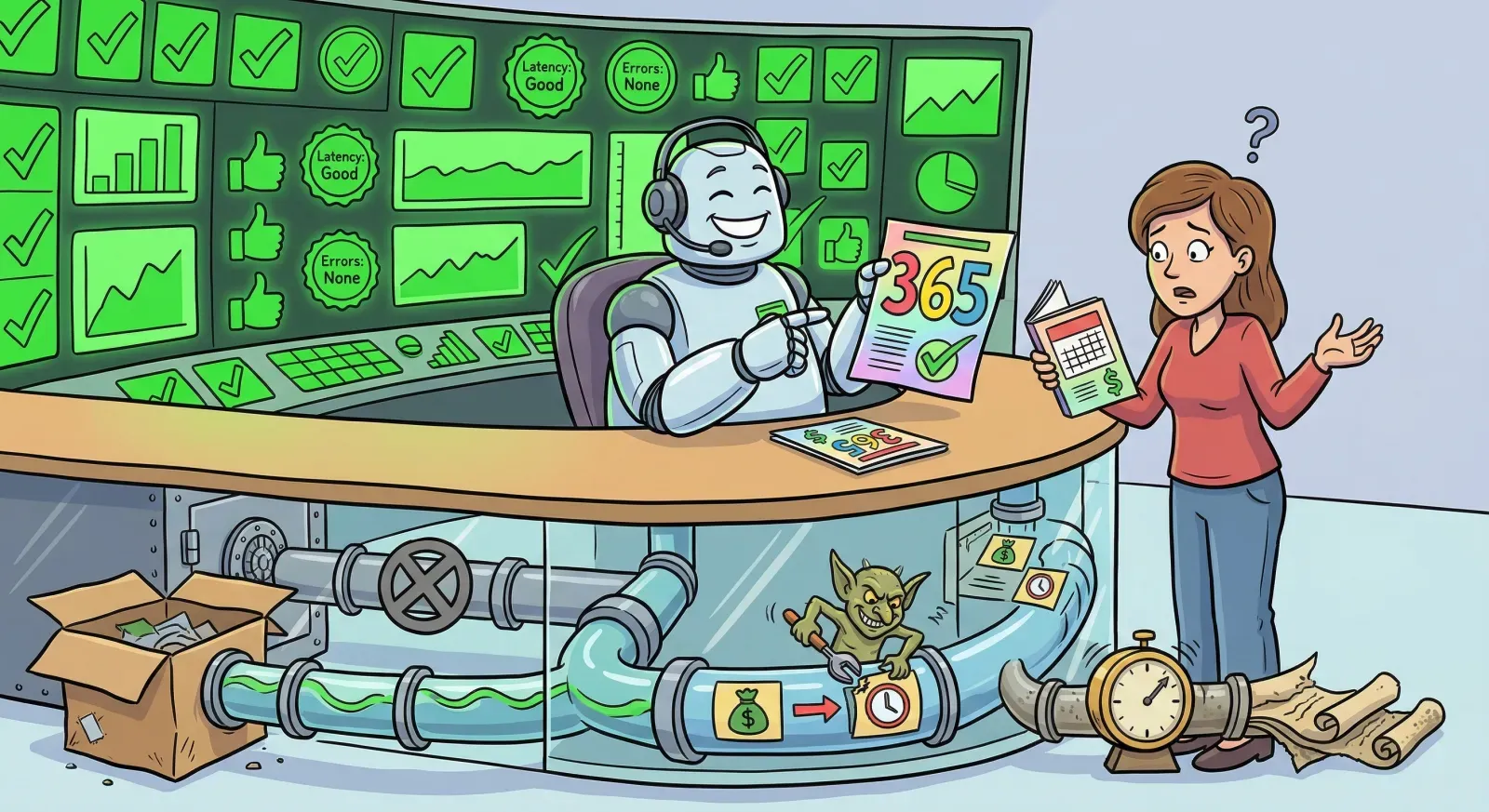

Imagine a customer support chatbot that confidently tells a user they have 365 days to return a product. The actual policy is 30 days. Every system metric is green: P95 latency at 142ms, throughput at 1.2k requests per second, eval accuracy at 94.2%, error rate at 0.02%. The dashboard is a wall of healthy green badges.

The answer is still wrong. And nobody knows until a customer tries to return something eleven months later.

This scenario is made up. The pattern is not. Variations of it are happening right now in production AI deployments across every sector, and the industry has almost no tooling to catch them.

The Failure That Doesn't Page You

Traditional software fails visibly. A service throws a 500. A database connection times out. A container crashes. You get paged. You look at the stack trace. You fix the bug.

AI pipeline failures don't work like that. The system keeps running. The latency stays low. The throughput stays high. The error rate stays near zero. And the answers are confidently, fluently, dangerously wrong, because somewhere upstream, the data changed and nobody noticed.

A VentureBeat analysis of enterprise RAG deployments found that freshness failures almost never come from embedding quality. They emerge when source systems change continuously while indexing pipelines update asynchronously, leaving retrieval consumers operating on stale context. Because the system still produces fluent, plausible answers, these gaps go undetected until something breaks downstream in a way a human actually notices. A healthcare organization's RAG system continued retrieving outdated clinical guidelines for 14 weeks after they were superseded. A legal department experienced a data privacy incident when metadata inconsistency caused privileged documents to surface in general queries. In both cases, the system metrics were pristine.

Gartner's assessment is that AI-ready data depends on three pillars: metadata management, data quality, and data observability. Without all three, more than 60% of AI projects fail to deliver on business goals and eventually get abandoned. DataKitchen's 2026 landscape analysis puts it more bluntly: a single schema drift that once meant a broken report now means thousands of incorrect predictions per second, because AI amplifies data quality failures exponentially.

The industry has spent enormous energy on better models, better prompts, better retrieval strategies. The part almost everyone is underinvesting in is the data path between source systems and the model's context window.

Where We Look vs. Where Things Break

Think about what a typical AI ops dashboard shows you: latency, throughput, eval accuracy, error rate. These are model-centric metrics. They tell you the engine is running. They tell you nothing about whether the fuel is clean.

The incidents that actually hurt production AI systems fall into three categories that no standard dashboard measures.

Staleness. A policy changed at 2pm. The pipeline last synced at 5am. By midnight the index is 19 hours old and the AI is still serving the pre-change answer. This is the most common silent failure in enterprise RAG systems. Unstructured's production guidance recommends incremental sync with content hashing precisely because periodic rebuilds create unacceptable staleness windows. But most teams still run nightly batch jobs and assume the data is current.
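To make the content-hashing idea concrete, here is a minimal sketch of hash-based change detection. The function names (`content_hash`, `plan_incremental_sync`) and the whitespace normalization are my own assumptions for illustration, not Unstructured's API. The point is only that a cheap fingerprint per document lets you reindex what changed instead of waiting for the nightly rebuild.

```python
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 fingerprint of a document after collapsing whitespace."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def plan_incremental_sync(source_docs, indexed_hashes):
    """Compare current source text against hashes stored at last index time.

    source_docs:    {doc_id: current text in the source system}
    indexed_hashes: {doc_id: hash recorded when the doc was last embedded}
    """
    plan = {"reindex": [], "unchanged": [], "delete": []}
    for doc_id, text in source_docs.items():
        if indexed_hashes.get(doc_id) == content_hash(text):
            plan["unchanged"].append(doc_id)
        else:
            plan["reindex"].append(doc_id)  # new, or changed since last sync
    # Anything indexed that no longer exists upstream should leave the index.
    plan["delete"] = [d for d in indexed_hashes if d not in source_docs]
    return plan
```

Run this on every source-change event (or on a short polling interval) and only the documents in `reindex` need to be re-embedded.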

Drift. Someone renames a field from refund_days to return_period. The connector silently drops the unmapped field. Embedding centroids shift by 42%. The retrieval ranking changes completely, and nobody is alerted because the pipeline didn't throw an error. Airbyte's analysis of ETL pipeline failures found that the most dangerous schema changes don't cause immediate failures at all. When source systems rename critical fields, pipelines may continue running while mapping wrong columns to destination tables.
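A schema check that would have caught the rename can be very small. The sketch below assumes you can snapshot each source's fields as a `{name: type}` mapping. `diff_schema` and `has_drift` are hypothetical helpers, and the rename detection is a heuristic that still needs a human to confirm it.

```python
def diff_schema(expected, observed):
    """Diff two {field_name: type_name} mappings and flag likely renames."""
    missing = sorted(set(expected) - set(observed))
    added = sorted(set(observed) - set(expected))
    retyped = sorted(
        name for name in set(expected) & set(observed)
        if expected[name] != observed[name]
    )
    # Heuristic only: a dropped field plus a new field of the same type
    # *may* be a rename. It is a hint for review, not a proven mapping.
    possible_renames = [
        (old, new) for old in missing for new in added
        if expected[old] == observed[new]
    ]
    return {
        "missing": missing,
        "added": added,
        "retyped": retyped,
        "possible_renames": possible_renames,
    }


def has_drift(report):
    """True if any category of schema change was detected."""
    return any(report.values())
```

Applied to the example above, the report lists `refund_days` as missing, `return_period` as added, and the pair as a possible rename, which is the alert the connector never raised.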

Authority. A non-canonical source overrides the actual policy document. The wrong document ranks first. The model produces a high-confidence wrong answer. In our hypothetical, the 365-day answer came from a knowledge article that someone edited incorrectly, not from the official policy document. Without a concept of source authority in the pipeline, both documents are treated as equally valid.
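One way to give the pipeline a notion of authority is to carry a tier on every retrieved chunk and rank on it. This is a sketch under assumptions: the `Hit` type, the integer `authority` scale, and the strict "canonical always wins" ordering are illustrative choices, and a weighted blend of score and authority would be an equally reasonable design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    doc_id: str
    score: float    # retriever similarity, higher is better
    authority: int  # 0 = canonical; larger = less authoritative (hypothetical scale)


def rerank_by_authority(hits, min_score=0.0):
    """Drop irrelevant hits, then order by authority tier, then by score."""
    relevant = [h for h in hits if h.score >= min_score]
    # Strict tiering: any relevant canonical hit outranks any non-canonical one.
    return sorted(relevant, key=lambda h: (h.authority, -h.score))
```

The `min_score` floor matters: without it, strict tiering would happily promote a canonical document that has nothing to do with the question.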

These three categories share a common feature: the model did exactly what it was supposed to do. The retrieval worked. The generation worked. The system performed flawlessly on every metric we measure. The problem was that the data feeding the system was stale, drifted, or from the wrong source, and we had no way to see it. (I talked about this pattern in more detail at KubeCon EU if you want the full walkthrough.)

The Stack Trace Problem

In traditional software engineering, the stack trace is the single most important debugging artifact. When a service fails, the stack trace tells you exactly where the failure occurred, what called what, and which specific line of code produced the error. Decades of engineering practice have been built around making stack traces as informative as possible.

AI data pipelines have no equivalent.

When a wrong answer ships with high confidence, the typical debugging cycle goes something like: the team blames the model, attempts guesswork remediation (retrain, re-prompt, hope), and watches the same failure recur next month. If you cannot trace an answer back to a specific source record through every transformation, embedding, and retrieval step, you cannot fix the system. You can only argue about prompts.

This is the gap. The industry has invested heavily in model observability. But between the source data and the model sits a pipeline with five or six stages (ingest, normalize, embed, retrieve, generate), each of which can silently corrupt data without triggering a single alert. Traditional ETL had three visible failure surfaces. A modern AI/RAG pipeline has twenty or more, and most of them are invisible.

What Software Learned That Data Hasn't

The software supply chain went through this exact reckoning, and the solution is instructive.

In 2020, the SolarWinds attack reached roughly 18,000 organizations by injecting malicious code into a trusted software update pipeline. The software looked fine. The builds passed. The signatures validated. But somewhere in the supply chain, the code had been tampered with, and nobody could trace how the final artifact related to the source code that produced it.

The response was SLSA (Supply-chain Levels for Software Artifacts), an OpenSSF framework that creates cryptographically verifiable provenance for software builds. SLSA doesn't just track what components went into a build. It creates signed attestations proving who built the artifact, what process they used, what inputs went in, and whether the build environment was trustworthy. Three levels of increasing assurance: L1 means provenance exists, L2 means it's signed against forgery, L3 means it's backed by hardened infrastructure.

The parallel to data pipelines is almost embarrassingly direct. A software build takes source code, runs transformations, and produces an artifact. A data pipeline takes source records, runs transformations (normalize, chunk, embed), and produces context that feeds a model. In both cases, the final output is only as trustworthy as the chain of custody from input to output. In both cases, the industry initially assumed the pipeline was trustworthy and learned the hard way that it wasn't.

CISA published an AI SBOM use case guide arguing that AI systems need the same transparency that SBOMs brought to traditional software. Palo Alto Networks, Wiz, and OWASP are all converging on AI Bill of Materials (AI-BOM) frameworks that extend SBOM concepts to cover datasets, model architectures, and training provenance. A Frontiers in Computer Science paper from January 2026 demonstrated an operational AIBOM schema achieving 98.7% reproducibility fidelity and 96.2% vulnerability match precision in containerized analytics.

But most of these efforts miss the critical target: they focus on the model artifact (what was the model trained on, what architecture was used, what version is deployed). That's important for compliance and security. It's also not where most production AI failures happen. The failures happen in the live data path, in the pipeline that continuously feeds context to the model at query time. The training data BOM matters for audits. The runtime data provenance matters for correctness.

The Data Bill of Materials

What production AI systems actually need is a Data Bill of Materials (DBOM) for the runtime pipeline. Not a static inventory of what the model was trained on, but a continuously generated, cryptographically signed record of what the model saw when it produced a specific answer.

The concept borrows directly from SLSA's leveled approach. At Level 1, provenance exists: you hash the content at each pipeline stage and can prove that the data the model saw at query time matched a known state. You can add this to any pipeline today with content hashing alone. At Level 2, those attestations are cryptographically signed with identity binding, preventing forgery. At Level 3, the attestations are backed by hardware (TEE/HSM), providing non-repudiation even if the software layer is compromised.
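Here is roughly what Level 1 could look like with nothing but the standard library. It is a sketch, not a DBOM specification: the record fields (`stage`, `output_sha256`, `prev`, `digest`) and the function names are assumptions I made up for illustration. Each stage hashes its output and links to the previous stage's record, so a later check can report the first stage whose data no longer matches. Levels 2 and 3 would add real signatures and hardware backing on top, which this does not attempt.

```python
import hashlib
import json


def _sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def attest(stage, output, prev_digest):
    """Record one stage's output hash, linked to the previous record."""
    body = {"stage": stage, "output_sha256": _sha256(output), "prev": prev_digest}
    digest = _sha256(json.dumps(body, sort_keys=True).encode("utf-8"))
    return {**body, "digest": digest}


def build_chain(stage_outputs):
    """stage_outputs: ordered list of (stage_name, output_bytes)."""
    chain, prev = [], None
    for stage, output in stage_outputs:
        record = attest(stage, output, prev)
        chain.append(record)
        prev = record["digest"]
    return chain


def first_broken_stage(chain, stage_outputs):
    """Return the first stage whose data does not match its record, or None."""
    prev = None
    for record, (stage, output) in zip(chain, stage_outputs):
        if attest(stage, output, prev) != record:
            return record["stage"]
        prev = record["digest"]
    return None
```

Because every record embeds the digest of the one before it, editing an earlier stage's data or record invalidates everything downstream, which is what lets you localize the break rather than just detect it.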

The practical value is immediate. When a wrong answer ships, instead of "let's re-prompt and hope," you trace the answer back to the specific source record, through the specific embedding, through the specific retrieval ranking, and identify exactly where the chain broke. The authority miss that let a non-canonical source override the policy document becomes visible, traceable, and fixable. The staleness gap that served 19-hour-old data becomes measurable against an SLO.

This isn't just good engineering practice. It's becoming a regulatory requirement. The EU AI Act's transparency obligations become enforceable in August 2026. Penalties under the Act reach €35 million or 7% of global annual turnover for the most serious violations, with a lower tier of up to €15 million or 3% for breaches of the transparency obligations. Article 50 requires data provenance documentation, machine-readable content labeling, and the ability to trace AI-generated outputs back to their sources. The European Commission's draft Code of Practice on AI transparency, being finalized by June 2026, specifies multi-layered marking strategies including digitally signed metadata and cryptographic provenance. Organizations that can produce structured data lineage will navigate compliance straightforwardly. Those without it will scramble.

SD Times reported a logistics provider whose routing engine began failing without any changes to code. The cause was a third-party model provider that had silently updated their weights. Because the organization lacked recorded lineage of that model version, the incident response team spent 48 hours auditing code when they should have been rolling back a model dependency. With runtime provenance, the version mismatch would have been visible immediately.

Three Questions Every Pipeline Must Answer

If you operate an AI system in production, there are three questions your pipeline needs to answer at any moment, for any answer it produces.

Is the data current? Every canonical source needs a freshness SLO. If your CRM last synced 19 hours ago, the pipeline should alert before users feel the lag. Staleness kills trust, and it kills it silently. Event-driven reindexing and content-hash change detection are table stakes for production RAG. Periodic rebuilds create unacceptable gaps that only surface when the wrong answer reaches the wrong person.
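A freshness SLO check is a few lines once you record a last-synced timestamp per source. In this sketch the source names, the SLO values, and the `freshness_violations` helper are all hypothetical. The only real requirement is that every canonical source has a timestamp and a budget.

```python
from datetime import datetime, timedelta, timezone


def freshness_violations(last_synced, slos, now=None):
    """Return {source: age} for every source older than its freshness SLO.

    last_synced: {source: timezone-aware datetime of last successful sync}
    slos:        {source: timedelta budget for acceptable data age}
    A source with an SLO but no recorded sync maps to None.
    """
    now = now or datetime.now(timezone.utc)
    violations = {}
    for source, slo in slos.items():
        synced_at = last_synced.get(source)
        if synced_at is None:
            violations[source] = None  # never synced: always a violation
            continue
        age = now - synced_at
        if age > slo:
            violations[source] = age
    return violations
```

Wire the result into the same alerting path as a latency SLO breach, so a CRM that is 19 hours behind a 4-hour budget pages someone before a user notices.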

Did the shape change? Track schema mutations and embedding drift continuously. When a field gets renamed, when a data type changes, when embedding centroids shift beyond a threshold, that should trigger an alert with the same urgency as a service outage. Zero untracked changes should be the target. 92% of data leaders say data observability will be core to their strategy in the next one to three years, but most organizations still discover schema drift through user complaints.
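For the embedding half of that question, one simple signal is how far the centroid of a collection's vectors has moved since a known-good baseline. The sketch below uses cosine distance between centroids, which is my assumption. The right metric and threshold depend on your embedding model, and a centroid can stay put while the distribution around it changes, so treat this as a coarse first alarm.

```python
import math


def centroid(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    if not vectors:
        raise ValueError("need at least one vector")
    dims = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dims)]


def cosine_distance(a, b):
    """1 - cosine similarity; 0.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / norm


def centroid_drift(baseline, current):
    """Cosine distance between the baseline and current centroids."""
    return cosine_distance(centroid(baseline), centroid(current))


def drift_alert(baseline, current, threshold):
    """True when centroid drift exceeds the (hypothetical) alert threshold."""
    return centroid_drift(baseline, current) > threshold
```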

What's the source? Trace every answer to a specific source record. Not "the model found this in the knowledge base," but "this answer came from document X, version Y, retrieved at timestamp Z, from a source classified as canonical or non-canonical." If you can't produce that trace, you cannot debug the system, you cannot audit the system, and increasingly, you cannot comply with the regulatory frameworks becoming enforceable in 2026.
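The "document X, version Y, timestamp Z" trace maps naturally onto a small record that gets written alongside every answer. The field names and the `AnswerTrace` and `SourceRef` types below are illustrative assumptions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class SourceRef:
    document_id: str
    version: str
    retrieved_at: str  # ISO 8601 timestamp of the retrieval
    canonical: bool    # True if the source is the system of record


@dataclass(frozen=True)
class AnswerTrace:
    answer_id: str
    sources: Tuple[SourceRef, ...]

    def non_canonical(self):
        """Document IDs that contributed to the answer but are not canonical."""
        return [s.document_id for s in self.sources if not s.canonical]

    def to_json(self):
        """Serialize the trace for logging or audit storage."""
        return json.dumps(asdict(self), sort_keys=True)
```

Logging `to_json()` next to each response is enough to answer "where did this come from?" after the fact, and `non_canonical()` gives you a cheap filter for answers that deserve a second look.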

The Operating Model Shift

There's a deeper point beneath the tooling discussion.

AI pipelines are production distributed systems. They have data sources, transformation stages, caching layers, retrieval services, and generation endpoints. They fail in all the ways distributed systems fail: partial failures, split-brain scenarios, stale caches, cascading corruption. The SRE disciplines that fixed microservices reliability over the past decade (SLOs, error budgets, incident response, blameless postmortems, distributed tracing) apply directly to AI data pipelines. We just haven't applied them yet.

The reason is partly historical. AI development grew out of research culture, where the focus was on model accuracy during training, not operational reliability in production. The tooling, the practices, the organizational structures all assume that the model is the hard part and everything else is plumbing. But in production, the model is increasingly the commodity. GPT-4, Claude, Gemini, Llama: they're all good enough for most enterprise tasks. The differentiator is whether the data reaching the model is current, correct, and from the right source. The plumbing is the product.

Make answer-to-source tracing routine, not heroic. That's the shift. When a wrong answer surfaces, the response should be "pull the trace, identify the broken stage, fix the data path" with the same mechanical reliability as "look at the stack trace, find the bug, deploy the fix" in traditional software. Right now, most teams are still in the "argue about prompts" phase. Getting to the "trace the data path" phase is the operational maturity leap that separates AI systems that work in demos from AI systems that work in production.

The model is not the mystery. The data path is the mystery. And until we instrument it with the same rigor we bring to every other piece of production infrastructure, we'll keep watching green dashboards while wrong answers ship.
