One Year of Liberation Day: What the Tariff Rollout Actually Revealed About AI Infrastructure

One year ago today, President Trump stood in the Rose Garden and imposed double-digit tariffs on virtually every product the United States imports. He called it "Liberation Day." Within weeks, tariff policy had changed more than 50 times. Rates spiked to 21.5%, fell, spiked again. The Supreme Court eventually ruled the emergency powers invocation illegal in February 2026. Customs officials are now working out how to refund roughly $166 billion in wrongly collected duties.

The impacts, good and bad, are well documented. Manufacturing shed 89,000 jobs. The trade deficit hit an all-time high. Inflation in February 2026 was 2.4%, with Fed Chair Powell explicitly attributing elevated goods-sector inflation to tariff effects. I'm not going to relitigate any of that.

What I want to talk about is what this year-long stress test revealed about the physical architecture of AI infrastructure, because it exposed a fragility that has nothing to do with which political party you vote for and everything to do with how we chose to build.

The $34 Billion Exemption

In the middle of the most aggressive trade war since Hawley-Smoot (hi, Ferris Bueller fans!), the administration quietly carved out the single largest tariff exemption in the entire regime: $34 billion per month in computers and parts. GPUs, servers, networking gear. The backbone of the AI buildout got a pass while farmers, manufacturers, and small businesses absorbed the full impact.

This exemption wasn't generosity. It was necessity. The six largest companies on Earth (NVIDIA, Microsoft, Apple, Alphabet, Amazon, Meta) are all American tech giants deeply embedded in the AI ecosystem, with a combined valuation (as of April 2026) exceeding $15 trillion. Their investment plans depend on importing components from Taiwan, South Korea, Vietnam, and Mexico through supply chains that took decades to optimize. You don't blow up $15 trillion in market cap to make a point about trade balances.

But the exemption only covered the chips themselves as standalone imports. Most semiconductors arrive inside assembled products like servers, which remained subject to tariffs. Construction materials, cooling systems, transformers, backup generators, copper cabling: all still taxed. One analysis found that tariffs on electric static converters alone added $1.9 billion in costs on top of $5.5 billion in 2024 imports. Tariffs on data processing machines more than doubled costs, with a 114% rate imposing $3.9 billion in additional levies.
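A quick back-of-the-envelope check makes those two figures easier to compare. The sketch below uses only the numbers quoted above. It assumes the $1.9 billion is measured against the full $5.5 billion import base and that the 114% rate applies uniformly to the affected imports, neither of which the underlying analysis necessarily guarantees. The function names are illustrative.

```python
def effective_rate(added_cost: float, import_value: float) -> float:
    """Added tariff cost as a fraction of the underlying import value."""
    return added_cost / import_value


def implied_import_base(added_cost: float, rate: float) -> float:
    """Import value that would produce `added_cost` at a flat tariff `rate`."""
    return added_cost / rate


# Figures quoted in the paragraph above, in billions of USD.
converters_rate = effective_rate(added_cost=1.9, import_value=5.5)
machines_base = implied_import_base(added_cost=3.9, rate=1.14)

print(f"Static converters: ~{converters_rate:.1%} effective rate")
print(f"Data processing machines: ~${machines_base:.2f}B implied import base")
```

Under those assumptions, the converters carry an effective rate of roughly a third, and the data processing machine levies imply an import base of a bit over $3.4 billion.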

The result was an industry that got a reprieve on its most expensive component while everything around it got more expensive to build, cool, power, and maintain.

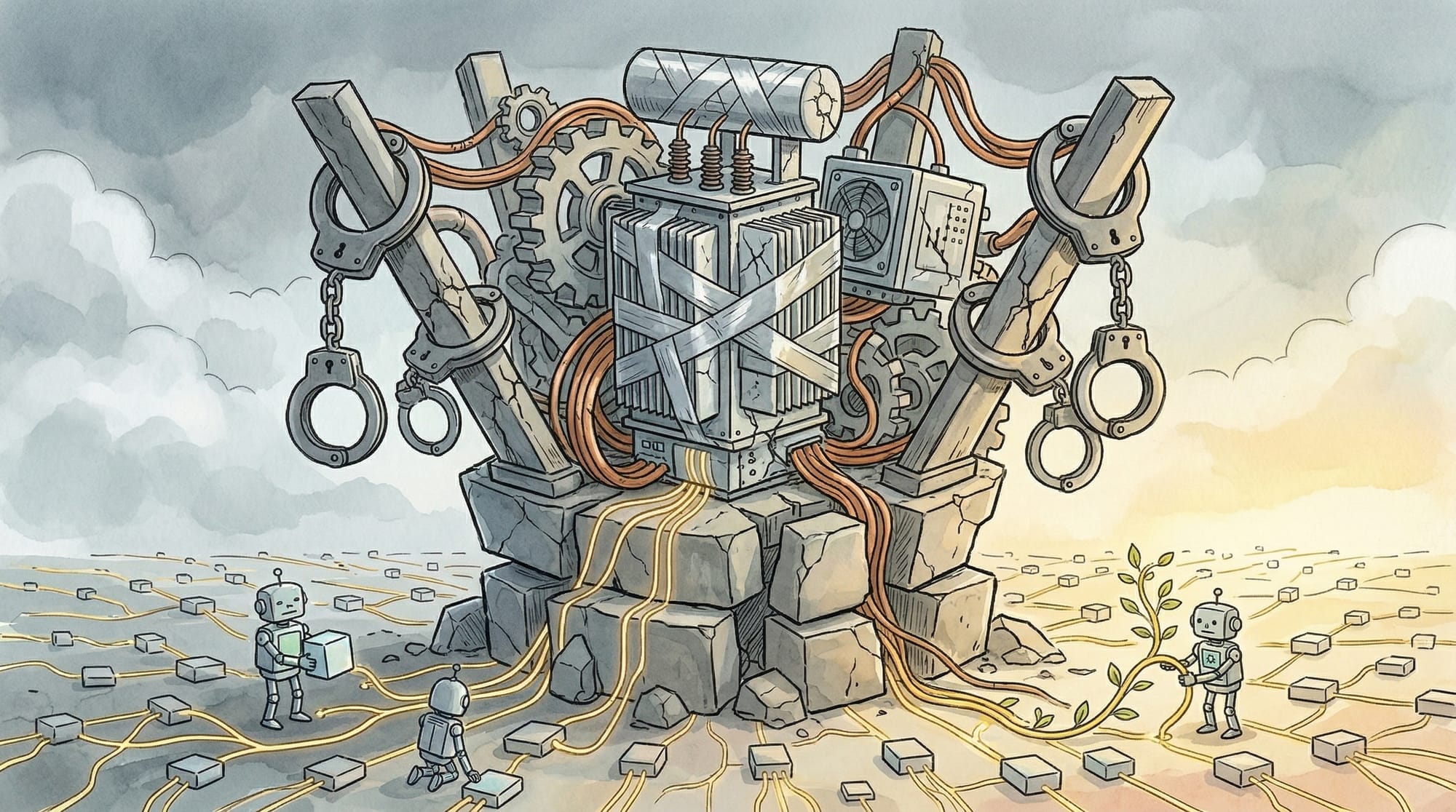

Concentration Creates Fragility

Let's step back and look at what this reveals structurally.

The AI industry is in the middle of the largest infrastructure buildout in technology history. Analysts project $400 to $450 billion in AI infrastructure spending by year-end, a 65% jump from 2024 levels. Data center construction in the U.S. now exceeds $41 billion per year, a 200% increase over three years. America is spending nearly as much on data centers as on office buildings.

And the vast majority of that spending is concentrated in a handful of massive facilities, each consuming 100+ megawatts of power, each dependent on the same globalized supply chains for transformers, cooling equipment, and construction materials. Each gigawatt of AI-optimized data center capacity costs $45 to $55 billion to construct, nearly triple the cost of a standard facility.

This is a classic single-point-of-failure architecture, but now at national scale. When you concentrate $400 billion of infrastructure in facilities that depend on imported transformers (over 80% of large power transformers are imported into the U.S.), imported cooling systems, imported construction steel, and imported copper, you've created an infrastructure base that is exquisitely sensitive to trade policy, geopolitical disruption, and supply chain shocks. Liberation Day proved this. One major U.S. enterprise faced $1.96 billion in projected additional yearly tariff exposure on data center components alone, and dozens of others had exposure between $100 million and $1 billion annually.

The exemption was the duct tape. Remove it, and the economics of the entire centralized buildout shift overnight.

The Inference Shift Changes the Calculus

The concentration bet made some sense when AI infrastructure was primarily about training. Training massive models requires enormous, tightly coupled GPU clusters with high-bandwidth interconnects. You genuinely need centralized facilities for that. The physics of training workloads favor concentration.

But the center of gravity is shifting. Bessemer Venture Partners' 2026 AI Infrastructure Roadmap notes that inference workloads now rival and in many cases exceed training in both compute demand and economic importance. Jensen Huang said it directly at GTC 2026: "the inflection point of inference has arrived."

Inference has fundamentally different economics than training. It's latency-sensitive (users are waiting for answers). It's distributed by nature (the data and users are everywhere, not concentrated in Northern Virginia). It needs to run continuously, not in burst training jobs. And it scales with the number of users and devices, not the number of parameters in your model.

NVIDIA's own AI Grid reference architecture, announced at GTC, demonstrates this shift. Comcast benchmarked a voice language model across four distributed sites versus a single centralized cluster. The distributed deployment held P99 latency under 500 ms during burst traffic while hitting 42,362 tokens per second, an 80.9% throughput gain over baseline. The centralized deployment actually lost throughput under identical burst conditions. Cost-per-token dropped 76%.
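To put those percentages in absolute terms, here is a small sketch that works backward from the quoted figures. It assumes the 80.9% gain and the 76% cost drop are both measured against the same baseline, which the benchmark summary does not spell out, so treat the derived values as rough.

```python
# Figures quoted above from the Comcast benchmark.
distributed_tps = 42_362   # tokens per second, distributed deployment
throughput_gain = 0.809    # 80.9% gain over baseline
cost_drop = 0.76           # 76% reduction in cost per token

# Assumption: the gain is relative, so distributed = baseline * (1 + gain).
baseline_tps = distributed_tps / (1 + throughput_gain)

# Cost per token relative to baseline, and the tokens-per-dollar multiple.
cost_ratio = 1 - cost_drop
tokens_per_dollar_multiple = 1 / cost_ratio

print(f"Implied baseline throughput: ~{baseline_tps:,.0f} tokens/s")
print(f"Cost per token vs. baseline: {cost_ratio:.0%}")
print(f"Tokens per dollar: ~{tokens_per_dollar_multiple:.1f}x baseline")
```

In other words, a 76% drop in cost-per-token means each dollar buys a little over four times as many tokens as it did at baseline.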

When the company that sells you the biggest GPUs on the planet builds a reference architecture for distributing inference across telecom edge nodes, the architectural argument is settled. The question is just how long the industry takes to catch up.

What a Distributed Architecture Actually Buys You

Distributed infrastructure isn't just a theoretical preference. In the context of what we learned from Liberation Day, it addresses the fragility at the root.

Supply chain resilience. Smaller, modular deployments don't require imported large power transformers with 18-month lead times. They can use standard commercial power infrastructure, locally sourced construction materials, and commodity cooling. A 50-kilowatt edge deployment doesn't have the same supply chain profile as a 500-megawatt hyperscale facility. The tariff exposure surface shrinks by orders of magnitude.

Geographic diversity. 75% of enterprise-managed data is now created and processed outside traditional data centers. Running inference where data is generated eliminates the need to move terabytes across networks into centralized facilities, and it eliminates the dependency on a handful of regions with sufficient power grid capacity. Zededa is already running edge AI deployments across more than 100 countries, in maritime operations, manufacturing, and retail environments that no hyperscale data center will ever serve.

Regulatory alignment. 93% of executives surveyed by IBM's Institute for Business Value say factoring AI sovereignty into business strategy will be essential in 2026. Half worry about over-dependence on compute resources concentrated in specific regions. The EU AI Act's transparency obligations become fully enforceable in August 2026. Processing data locally, in the jurisdiction where it was created, is not just operationally efficient. It's becoming a compliance requirement.

Cost structure. The economics of centralized inference are brutal at scale. You're paying for the network to move data in, the compute to process it, the network to move results out, and the massive facility overhead that supports all of it. Distributed inference collapses that cost structure by processing at the source. The Comcast benchmark showed a 76% reduction in cost-per-token. At inference volumes that are growing exponentially, that difference compounds fast.
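The four cost components named above can be written down as a simple additive model. This is only a sketch: the class name, the per-million-token unit, and every number in it are hypothetical placeholders chosen to show the shape of the comparison, not measurements from Comcast or anyone else.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceCost:
    """Cost per million tokens, split into the four components named above."""
    network_in: float
    compute: float
    network_out: float
    facility_overhead: float

    def total(self) -> float:
        return (self.network_in + self.compute
                + self.network_out + self.facility_overhead)


def reduction(before: InferenceCost, after: InferenceCost) -> float:
    """Fractional cost reduction going from `before` to `after`."""
    return 1 - after.total() / before.total()


# Placeholder values only (arbitrary units); substitute your own measurements.
centralized = InferenceCost(network_in=2.0, compute=5.0,
                            network_out=1.0, facility_overhead=2.0)
# Processing at the source: no inbound transfer, smaller results, less overhead.
distributed = InferenceCost(network_in=0.0, compute=5.0,
                            network_out=0.5, facility_overhead=1.0)

print(f"Reduction with these placeholders: {reduction(centralized, distributed):.0%}")
```

The point of the model is that compute is only one term. Even with identical compute cost, removing inbound data movement and trimming facility overhead changes the total materially.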

The Lesson Isn't About Tariffs

I want to be clear about what the argument is and isn't. This isn't about whether tariffs are good policy or bad policy. Reasonable people disagree about trade, and this blog isn't the place to settle that.

The argument is about architectural resilience. Liberation Day was a stress test, and the concentrated infrastructure model failed it. The industry needed an emergency exemption to keep functioning. When tariff policy changes more than 50 times in a year and businesses report they simply could not plan, any infrastructure strategy that depends on policy stability in an unstable world is fundamentally fragile.

Distributed systems engineers have known this for decades. You don't build a system that depends on a single coordinator staying up. You don't route all traffic through a single network path. You don't store all your data in a single region. These are basic resilience principles that the industry applies to every other layer of infrastructure. But for AI compute, we somehow decided to concentrate everything in a few massive facilities dependent on globalized supply chains, and then acted surprised when a single policy change threatened the whole thing.
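Applied to inference, the "no single path" principle looks something like the sketch below: route each request to the lowest-latency healthy site and fall back when one drops out. The `Site` type, site names, and latency values are hypothetical, and a real router would also need health checks, capacity limits, and retries.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    latency_ms: float
    healthy: bool = True


def pick_site(sites: list[Site]) -> Site:
    """Return the lowest-latency healthy site; raise if none is available."""
    candidates = [s for s in sites if s.healthy]
    if not candidates:
        raise RuntimeError("no healthy inference site available")
    return min(candidates, key=lambda s: s.latency_ms)


# Hypothetical topology: two edge sites plus one central fallback.
sites = [Site("edge-a", 12.0), Site("edge-b", 18.0), Site("central", 70.0)]

first = pick_site(sites).name    # nearest healthy site
sites[0].healthy = False         # simulate losing that site
second = pick_site(sites).name   # traffic shifts to the next-best site

print(first, "->", second)
```

Losing one site degrades latency slightly instead of taking the service down, which is the property a single centralized facility cannot offer.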

The alternative isn't theoretical. The hardware exists (a $249 Jetson runs LLMs and vision models at the edge). The reference architectures exist (NVIDIA's AI Grid). The early deployments exist (Comcast, Maersk, T-Mobile). The economics work (76% cost reduction at the inference layer).

What's missing is the connective tissue: the data pipelines, orchestration, and observability that let you manage thousands of distributed inference nodes with the same operational maturity you'd expect from a centralized deployment. That's the real infrastructure gap, and it's the same gap whether the policy environment is stable or chaotic.

Liberation Day was a year ago. The exemption is still in place. The next disruption (and there is always a next disruption) might not come with a carve-out attached.

Build accordingly.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen of the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!