The Grid Said No

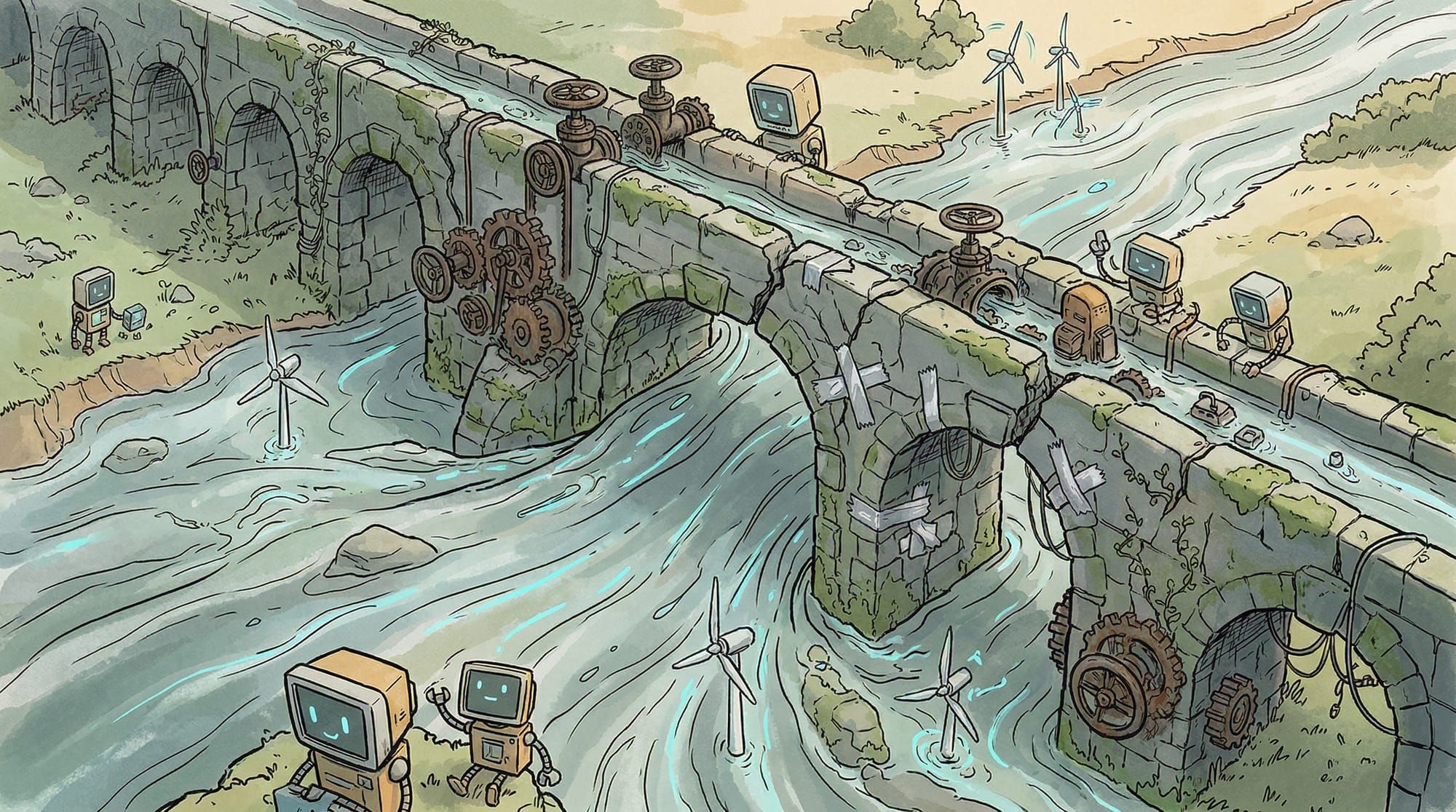

Eleven gigawatts of announced data center capacity is frozen in 2026, with no construction underway. Not because of chip shortages. Not because of capital constraints. Because the electrical grid cannot deliver the power.

That number deserves a moment. Eleven gigawatts is roughly the output of eleven nuclear power plants. It's enough to power every household in New York City twice over. And it's sitting in limbo because the infrastructure required to connect these facilities to the grid simply doesn't exist yet, and won't for years.
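The comparison is simple unit arithmetic. The sketch below makes it explicit under two assumptions that are mine, not the post's: a large nuclear reactor produces roughly 1 GW of electricity, and the frozen capacity would draw power around the clock at full load.

```python
# Back-of-the-envelope check of the "eleven gigawatts" comparison.
# Assumptions (not from the original post): ~1 GW per large reactor,
# and continuous full-load operation.

FROZEN_CAPACITY_GW = 11.0   # announced capacity with no construction underway
REACTOR_OUTPUT_GW = 1.0     # assumed electrical output of one large reactor
HOURS_PER_YEAR = 24 * 365   # 8,760 hours, ignoring leap years


def reactor_equivalents(capacity_gw: float, reactor_gw: float = REACTOR_OUTPUT_GW) -> float:
    """Number of assumed-size reactors needed to supply this load."""
    return capacity_gw / reactor_gw


def annual_energy_twh(capacity_gw: float, load_factor: float = 1.0) -> float:
    """Yearly energy: GW x hours gives GWh; divide by 1,000 for TWh."""
    return capacity_gw * HOURS_PER_YEAR * load_factor / 1000.0


if __name__ == "__main__":
    print(f"Reactor equivalents: {reactor_equivalents(FROZEN_CAPACITY_GW):.0f}")
    print(f"Annual energy at full load: {annual_energy_twh(FROZEN_CAPACITY_GW):.1f} TWh")
```

Lowering `load_factor` shows how the annual figure shrinks if facilities run below full utilization.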

According to Bloomberg, close to half of all planned US data center builds in 2026 are projected to be delayed or canceled. Not scaled back. Not postponed by a quarter. Delayed or canceled entirely, because the grid infrastructure that would feed them cannot be built fast enough. Fortune described it as "a bend in the trajectory", which is a polite way of saying the industry hit a wall it didn't see coming.

This is worth paying attention to, because the AI industry has spent the last two years treating chips as the bottleneck. NVIDIA's stock price, the Blackwell allocation wars, the sovereign AI chip strategies. All of that assumed that if you could get the GPUs, you could deploy them. The grid is saying otherwise.

The Maturity Mismatch

Engineering News-Record ran a piece this month that reframes the problem clearly: grid access, not land, has emerged as the primary bottleneck for data center construction. The distinction matters. Land is a market problem. Grid access is a physics and logistics problem with fundamentally different time constants.

IT hardware manufacturing can scale within 12 to 24 months. NVIDIA is already shipping Blackwell at volume. AMD's MI400 is ramping. The supply side of compute has responded to demand with the speed you'd expect from semiconductor companies running on two-year product cycles.

Power grid infrastructure operates on a completely different timeline. High-voltage transformers, the critical components that step down transmission-level power for data center consumption, have multi-year lead times. The manufacturers that build them haven't scaled production because these are specialized, expensive industrial components with limited historical demand growth. Switchgear, breakers, and substation equipment face similar constraints. You can order a rack of H100s and have it delivered in weeks. Order a high-voltage transformer and you're looking at 24 to 36 months, minimum.

This creates what the industry is calling a maturity mismatch: the compute supply chain runs on semiconductor timelines while the power supply chain runs on heavy industrial timelines. The gap between them is measured in years, not quarters. And it's getting worse, because every new data center announcement adds to the interconnection queue without adding any new grid capacity.

Power constraints are extending construction timelines by 24 to 72 months. Facilities that need only 12 to 18 months of construction sit frozen at the announcement stage because they can't secure grid connections. The buildings could go up. The chips are ready. The grid is the constraint, and the grid moves on its own schedule.
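The mismatch is a critical-path problem: a facility goes live only when its slowest parallel track finishes. The sketch below uses the month ranges quoted above (12 to 24 for IT hardware, 12 to 18 for construction, 24 to 36 for a high-voltage transformer). Treating them as independent tracks that all start at month zero is a simplifying assumption of mine, and it leaves out any interconnection-queue wait.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    """One parallel workstream with a (best, worst) duration in months."""
    name: str
    best_months: int
    worst_months: int


# Ranges quoted in the post; running them in parallel from month zero is an assumption.
TRACKS = [
    Track("IT hardware", 12, 24),
    Track("building construction", 12, 18),
    Track("high-voltage transformer", 24, 36),
]


def duration(track: Track, worst_case: bool) -> int:
    """Pick the best- or worst-case duration for a track."""
    return track.worst_months if worst_case else track.best_months


def go_live_month(tracks: list[Track], worst_case: bool = False) -> tuple[int, str]:
    """The facility energizes only when the slowest track finishes."""
    slowest = max(tracks, key=lambda t: duration(t, worst_case))
    return duration(slowest, worst_case), slowest.name


def idle_months(tracks: list[Track], worst_case: bool = False) -> dict[str, int]:
    """Months each track sits finished, waiting on the critical path."""
    finish, _ = go_live_month(tracks, worst_case)
    return {t.name: finish - duration(t, worst_case) for t in tracks}


if __name__ == "__main__":
    for worst in (False, True):
        month, bottleneck = go_live_month(TRACKS, worst_case=worst)
        label = "worst case" if worst else "best case"
        print(f"{label}: live at month {month}, gated by {bottleneck}")
        print(f"  idle months per track: {idle_months(TRACKS, worst_case=worst)}")
```

The idle months carry the argument: even in the best case, the chips and the building sit finished for a year waiting on the transformer, and any queue wait comes on top of that.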

The Queue Problem

Regional grid operators are drowning. Data centers now account for over 70% of new large-load interconnection requests with regional operators like PJM and ERCOT. PJM, which manages the grid for 65 million people across 13 states and D.C., has seen its interconnection queue explode. ERCOT in Texas is facing similar pressure.

The arithmetic is brutal. In the regions where hyperscalers most want to build (Northern Virginia, central Texas, the Ohio corridor), the grid was designed for gradual industrial growth. It was not designed for individual facilities requesting hundreds of megawatts of power, clustered in the same geographic areas, all arriving within the same few years. If one company's facility would strain the local grid, five companies filing in the same corridor will break it.

Chevron is now negotiating to build natural gas plants specifically to power Microsoft data centers in Texas, bypassing the grid entirely. That's not energy strategy. That's an admission that the public grid cannot support what the industry wants to build. The "shadow grid" approach of private power generation is becoming the default plan for hyperscale facilities, and it carries its own problems: permitting timelines, environmental review, and the political backlash I wrote about in The Great Refusal.

The Political Constraint Compounds the Physical One

I counted over 300 data center regulation bills filed in the first six weeks of 2026. Six states with active moratoria. Fourteen states with local construction pauses. Those numbers haven't decreased since I last wrote about them. They've increased.

Virginia, the state with more data centers than any other, is phasing out its data center tax exemption. Georgia's $2.5 billion exemption is under legislative review. The bipartisan consensus isn't "build more" anymore. It's "who's paying for this?"

This matters for the grid story because political and physical constraints reinforce each other. A community that can't get grid capacity for its own growth isn't going to welcome a data center that consumes as much power as a small city. A grid operator dealing with interconnection delays isn't going to fast-track permits when state legislators are filing moratoria. The bottleneck isn't just physical. It's systemic. Political opposition makes grid access harder, which makes construction timelines longer, which gives opposition more time to organize, which makes political opposition stronger.

ITIF published an analysis in April identifying five core concerns about AI data centers and followed it with a companion piece arguing that new data centers won't overwhelm the grid. Both pieces are thoughtful. Both miss the compound effect. It's not about whether the grid can theoretically handle the load. It's about whether it can handle the load on the timeline the industry needs, in the locations the industry wants, while navigating the political environment the industry created.

The answer, increasingly, is no.

The $700 Billion Bet Against Physics

The five largest hyperscalers have committed to $660 to $700 billion in capex for 2026. Nearly double 2025. AI capex now accounts for roughly a fifth of US GDP growth. OpenAI's facility in Abilene, Texas, still under construction, will draw 1.2 gigawatts when complete.

Every dollar of that capex assumes the power will be there. The grid is saying it won't. Not "it won't ever," but "it won't on your timeline." And in an industry where inference demand is doubling faster than anyone predicted, a three-year grid delay doesn't just slow deployment. It creates a structural compute deficit that compounds quarterly.
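To see why a delay compounds, model demand as growing exponentially while deliverable capacity stays flat until the grid connection lands. The starting demand, installed capacity, doubling period, and delay below are hypothetical parameters chosen for illustration, not figures from the post; the shape of the curve is the point, not the numbers.

```python
def demand_at(quarter: int, base_demand: float, doubling_quarters: float) -> float:
    """Demand that doubles every `doubling_quarters` quarters (hypothetical growth model)."""
    return base_demand * 2 ** (quarter / doubling_quarters)


def deficit_by_quarter(base_demand: float, installed: float,
                       doubling_quarters: float, delay_quarters: int) -> list[float]:
    """Unmet demand in each quarter while new capacity waits on the grid.

    Assumes capacity stays fixed at `installed` for the whole delay;
    anything above it goes unserved.
    """
    return [
        max(0.0, demand_at(q, base_demand, doubling_quarters) - installed)
        for q in range(delay_quarters + 1)
    ]


if __name__ == "__main__":
    # Hypothetical: demand starts equal to installed capacity, doubles yearly,
    # and the grid connection arrives three years (12 quarters) late.
    deficits = deficit_by_quarter(base_demand=100.0, installed=100.0,
                                  doubling_quarters=4, delay_quarters=12)
    for q in (0, 4, 8, 12):
        print(f"quarter {q:2d}: unmet demand {deficits[q]:.0f}")
```

Under these assumptions, the gap at the end of a three-year delay is seven times the capacity that was installed, even though nothing went wrong except the date.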

This is the part that should concern anyone thinking about AI infrastructure strategically. The constraint isn't capital. The industry has more capital than it knows what to do with. The constraint isn't chips. NVIDIA is shipping. The constraint isn't demand. Inference volumes are exploding. The constraint is the physical infrastructure that converts electrical energy into computational capacity, and that infrastructure obeys industrial timelines, not software timelines.

What the Grid Constraint Actually Means

If you can't bring enough power to centralized facilities fast enough, you have two options.

Option one: wait. Build the transformers, lay the transmission lines, negotiate the grid connections, fight the political battles, and deploy at scale in three to five years. This is the current default strategy, and it assumes that being three to five years late to the inference market is acceptable.

Option two: distribute the load. Instead of concentrating hundreds of megawatts in single locations, deploy smaller compute across existing infrastructure that already has power, cooling, and connectivity. Cell towers (I've written before about the 6.4 million of them, with 70% of their capacity sitting idle). Retail locations. Manufacturing floors. Hospital campuses. Telecom edge sites. Places where the grid connection already exists, where the power is already allocated, where adding incremental AI compute doesn't require a new substation.

The economics have shifted to support this. NVIDIA's Jetson Orin Nano Super runs 67 TOPS at $249. Small language models deliver 80-90% of LLM capabilities for the task-specific workloads that constitute the majority of enterprise inference. Google's Gemma 4, released April 2 under Apache 2.0, gives you 2B to 31B parameter models that run on local hardware with no licensing constraints. The technology to distribute inference across thousands of small sites instead of concentrating it in dozens of massive ones is ready.

What's not ready is the orchestration layer. The data pipelines that shape raw sensor data for local models, the management plane that deploys and updates models across heterogeneous edge sites, the observability stack that tells you when site 247 out of 500 is serving stale predictions. I wrote about this missing layer after GTC, and the grid constraint makes it more urgent, not less.
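As one illustration of the observability piece, here is a minimal sketch of a fleet check that flags edge sites whose deployed model lags the version the management plane expects, or whose last heartbeat is too old. The `SiteStatus` record, the version strings, and the 15-minute threshold are all hypothetical; a real fleet would pull this state from whatever management plane it runs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class SiteStatus:
    """Hypothetical per-site report from an edge inference node."""
    site_id: int
    model_version: str
    last_heartbeat: datetime


def find_stale_sites(sites: list[SiteStatus], expected_version: str, now: datetime,
                     max_silence: timedelta = timedelta(minutes=15)) -> list[tuple[int, str]]:
    """Return (site_id, reason) for each site on an outdated model or gone quiet."""
    problems = []
    for s in sites:
        if s.model_version != expected_version:
            problems.append((s.site_id, f"serving {s.model_version}, expected {expected_version}"))
        elif now - s.last_heartbeat > max_silence:
            problems.append((s.site_id, "no heartbeat since " + s.last_heartbeat.isoformat()))
    return problems


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    fleet = [SiteStatus(i, "v42", now) for i in range(1, 501)]
    # Simulate the scenario from the text: site 247 of 500 never got the latest model.
    fleet[246] = SiteStatus(247, "v41", now - timedelta(hours=2))
    for site_id, reason in find_stale_sites(fleet, expected_version="v42", now=now):
        print(f"site {site_id}: {reason}")
```

Checking the version before the heartbeat means a site that is both outdated and silent gets reported once, with the more actionable reason.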

The Signal in the Delay

The grid bottleneck is not a temporary supply chain hiccup. High-voltage electrical infrastructure has multi-year procurement and construction timelines that won't compress because the AI industry needs them to. The political environment is making those timelines longer, not shorter. The demand curve shows no sign of flattening.

This is a structural condition. The centralized compute model is hitting a physical ceiling that capital alone cannot raise fast enough. Every month of grid delay is a month of unmet inference demand, a month of competitive disadvantage for companies waiting in the interconnection queue, a month where the economic argument for distributed computing gets stronger.

The grid said no. The question is whether the industry will keep arguing with physics, or start building for the topology the physical world is demanding.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen about the real-world challenges of data preparation for machine learning, focusing on operational, compliance, and cost issues. I'd love to hear your thoughts!