RAG Is Read-Only Memory

Andrej Karpathy put a name to the architectural flaw breaking enterprise AI: RAG treats retrieval as understanding and re-derives knowledge from scratch on every query. The missing piece is a compilation step. At personal scale it's a gist on GitHub; at enterprise scale it's an infrastructure layer.

Andrej Karpathy has shifted how he uses LLMs. Instead of burning tokens on code generation, he's spending them compiling structured knowledge bases: markdown wikis that an LLM builds, links, and maintains from raw source documents. One of his research corpora has grown to roughly 100 articles and 400,000 words, and he didn't write a sentence of it. Two days after tweeting about the setup, he published a GitHub gist designed to be copy-pasted into your LLM agent so it builds the system for you. Community implementations followed within a week.

The tweet went viral for the obvious reason, which is that it works and it's cheap. But there's a less obvious reason worth paying attention to: Karpathy put a name to the architectural flaw that's been quietly breaking enterprise AI systems for three years. He called the missing piece a "compilation step," and once you see it, you can't unsee it.

The part Karpathy got right

Most production AI systems that consult documents use Retrieval-Augmented Generation. You index a corpus. At query time, the retriever finds the relevant chunks. The model reads them and produces an answer. This works well enough to ship, which is why every "talk to your documents" product on the market is some flavor of it.

What RAG does is treat retrieval as if it were understanding. It isn't. The system rediscovers knowledge from scratch on every query. Ask it to synthesize five documents and it finds and stitches chunks every time. Ask the same question tomorrow and it does the same work again. Nothing accumulates. Nothing compounds. Anyone who has done serious research with an LLM knows this feeling. You build up a rich, nuanced understanding over a two-hour session, and then the context window fills up or the session ends, and the next time you sit down it's like the model had a lobotomy overnight. If one of the documents you consulted yesterday has changed since then, the system has no idea; it just returns whatever the most recent index captured, confident and wrong.

Karpathy's alternative adds the step RAG skips. Raw documents live in one directory, immutable. A compiled wiki lives in another, where the LLM maintains summaries, entity pages, backlinks, and an index. A schema file tells the LLM how to operate the wiki: folder structure, citation rules, ingest workflow, linting. When new source material arrives, the model reads it, updates the entity pages, notes where the new data contradicts old claims, and re-knits the backlinks. The knowledge gets compiled once and kept current. Health checks scan the wiki for inconsistencies, missing connections, and stale content. The system heals itself.
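
To make the health-check idea concrete, here is a minimal sketch of one such check: finding broken links and orphaned pages. It is not Karpathy's script. The `[[Page Title]]` link syntax, the in-memory `pages` dict, and the `lint_wiki` name are all assumptions made for illustration.

```python
import re

# Assumption: pages link to each other with [[Page Title]] markers.
LINK = re.compile(r"\[\[([^\]]+)\]\]")

def lint_wiki(pages: dict[str, str]) -> dict[str, list[str]]:
    """Report broken links and orphan pages for an in-memory wiki.

    `pages` maps a page title to its markdown body (hypothetical layout).
    """
    linked_to: set[str] = set()
    broken: list[str] = []
    for title, body in pages.items():
        for target in LINK.findall(body):
            if target in pages:
                linked_to.add(target)
            else:
                broken.append(f"{title} -> {target}")
    # The index is the entry point, so it is never counted as an orphan.
    orphans = sorted(t for t in pages if t not in linked_to and t != "index")
    return {"broken_links": sorted(broken), "orphans": orphans}

wiki = {
    "index": "Start at [[Transformers]].",
    "Transformers": "See [[Attention]] and [[Tokenization]].",
    "Attention": "Core mechanism of [[Transformers]].",
    "Scaling Laws": "Nothing links here yet.",
}
report = lint_wiki(wiki)
print(report)
```

A real lint pass would hand findings like these back to the LLM as repair tasks; the point here is only that "missing connections" is a mechanical check once the wiki has structure.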

This is a compiler architecture applied to knowledge. Raw sources are inputs. Compiled wiki pages are outputs. The schema is the compiler specification. The compiled artifact is more useful than the raw source for most consumers, but you keep the raw source because you need to recompile when inputs change and audit when outputs are wrong. That's the idea. And it's the part the RAG paradigm left out.

Why compilation beats retrieval

Compilation forces the LLM to read, synthesize, and integrate new information into a standing knowledge structure. When something contradicts existing knowledge, the system notices during compilation, not during retrieval. Drift becomes visible at write time rather than failing silently at read time. As one commenter on the gist put it, every proposition should record which source files produced it and their content hashes at compilation time, so that when source files change, the compiled knowledge knows it might be wrong.
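
The commenter's suggestion is small enough to sketch. The version below assumes SHA-256 content hashes and an in-memory `raw` dict standing in for the raw directory; `Proposition`, `compile_proposition`, and `is_stale` are hypothetical names, not anything from the gist.

```python
import hashlib
from dataclasses import dataclass, field

def content_hash(text: str) -> str:
    """SHA-256 of a source's content, used as its fingerprint."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

@dataclass
class Proposition:
    claim: str
    # Maps source path -> content hash recorded at compile time.
    sources: dict[str, str] = field(default_factory=dict)

def compile_proposition(claim: str, paths: list[str], raw: dict[str, str]) -> Proposition:
    """Record which sources produced the claim, and their hashes right now."""
    return Proposition(claim, {p: content_hash(raw[p]) for p in paths})

def is_stale(prop: Proposition, raw: dict[str, str]) -> bool:
    """True if any cited source is missing or no longer matches its recorded hash."""
    return any(
        path not in raw or content_hash(raw[path]) != recorded
        for path, recorded in prop.sources.items()
    )

raw = {"raw/returns.md": "Returns accepted within 30 days."}
prop = compile_proposition("Return window is 30 days", ["raw/returns.md"], raw)
fresh_before = is_stale(prop, raw)   # False: nothing has changed yet

raw["raw/returns.md"] = "Returns accepted within 14 days."
stale_after = is_stale(prop, raw)    # True: the source drifted under the claim
```

Note what this does and doesn't tell you: a hash mismatch means the claim might be wrong, not that it is. It is a trigger for recompilation, not a verdict.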

I've written about the production version of this before. In "The Missing Part of the Pipeline," I went through why RAG deployments fail in the field: it's almost never bad embeddings. It's stale sources, schema drift, and authority confusion. VentureBeat's analysis of enterprise RAG found the same thing. The retriever returns chunks that were correct when they were indexed and aren't anymore. The system sounds confident because it is: it's confident about the index. The index is confidently wrong.

What Karpathy named is the fix at personal scale. The question for anyone trying to run production AI is what this pattern looks like at the scale of a real enterprise, where "the corpus" isn't one person's folder of PDFs.

The personal wiki is the easy case

Karpathy's setup runs on one laptop. The raw directory is whatever research papers, articles, and notes he drops in. The LLM compiles, he navigates via the index, and the whole wiki comes to roughly 400,000 words. At that scale, the DAIR.AI community analysis observed that a well-maintained index file replaces the need for vector search entirely. The LLM reads the index, picks the right page, drills in. No embeddings, no reranking, no infrastructure. A hacky collection of scripts, and it works.

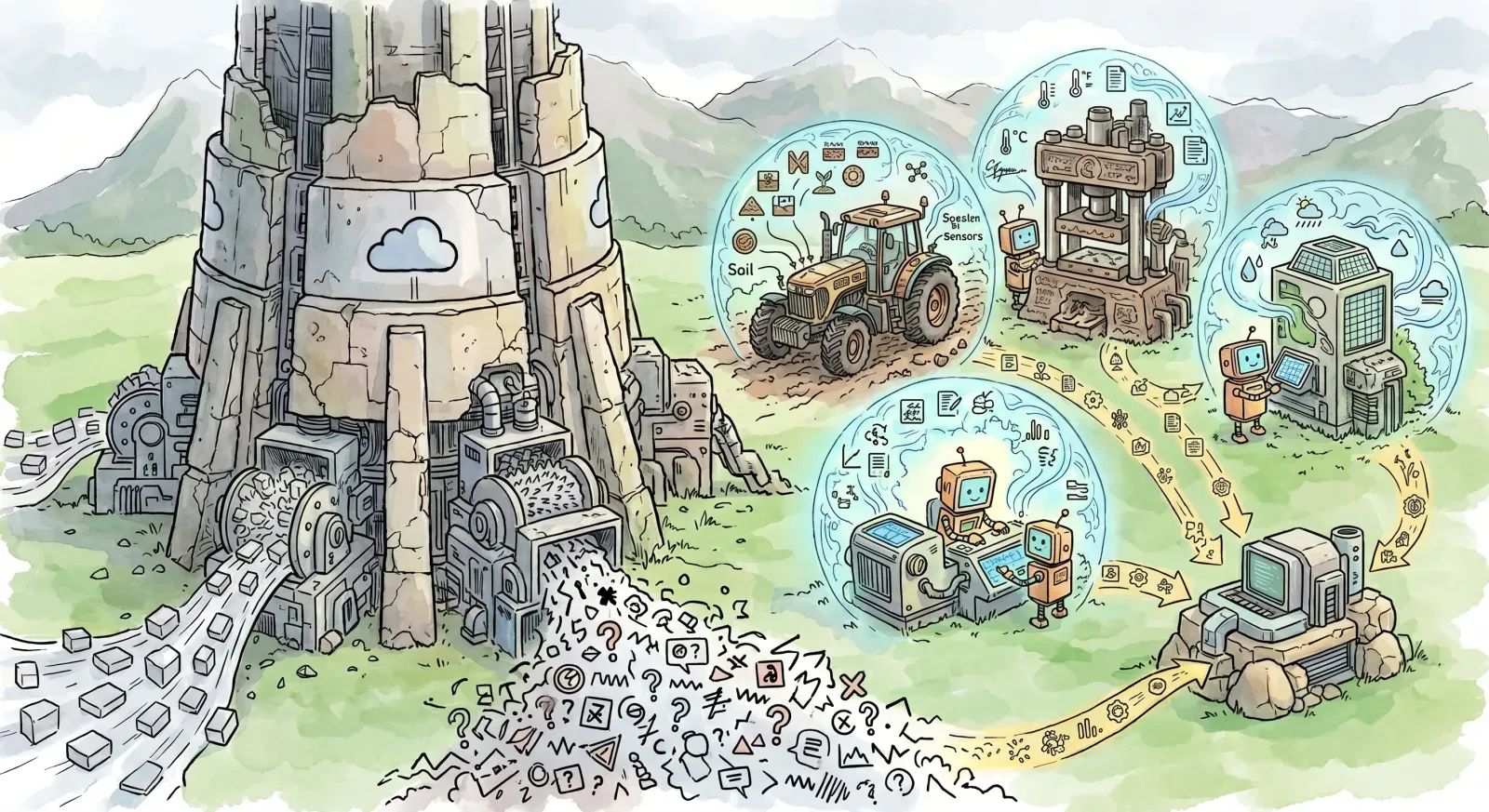

The enterprise version runs on a different planet. The "raw directory" isn't a folder. It's a continuously updated Salesforce instance, a product catalog in an ERP that changes with every inventory shipment, policy documents in a CMS with approval workflows, support tickets arriving by the thousand per day, and external regulatory feeds that update whenever some committee in Brussels publishes a new guidance document. The corpus is larger than any context window can navigate. The rate of change is higher than any periodic rebuild can absorb. Authority is contested: the official return policy document and the support agent's informal knowledge article say different things, and the system has to know which one wins.

If you try to run Karpathy's script against that environment, it catches fire within an hour. Not because the pattern is wrong. The pattern is exactly right. The pattern needs infrastructure that doesn't exist yet.

What the distributed version actually requires

The infrastructure question is where the real work lives, and it's the part the discourse has mostly left unanswered.

Start with when to recompile. The personal wiki recompiles whenever Karpathy runs the script. That's fine for one person. In production you have three options, all with real tradeoffs. Event-driven recompilation fires when a source system announces a change. It's ideal in theory, a nightmare in practice: most enterprise source systems don't emit reliable change events, and the ones that do tend to fire hundreds of events for what amounts to a single logical update. Scheduled recompilation runs on a cadence. Cheap and predictable, but every wiki page now has a staleness window equal to the schedule interval. Hybrid is where most production systems will land: high-authority sources get event-driven recompilation, everything else runs on a schedule, and a continuous staleness check flags anything that's drifted past its freshness SLO.
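
Here is roughly what the hybrid policy looks like as code. Treat it as a sketch under assumptions: the five-second coalescing window, the `Page` fields, and the three decision labels are all invented for illustration, and a real system would read them from configuration.

```python
from dataclasses import dataclass

@dataclass
class Page:
    name: str
    sources: frozenset[str]
    high_authority: bool
    last_compiled: float   # seconds since epoch
    freshness_slo: float   # maximum tolerated age, in seconds

def coalesce(events: list[tuple[str, float]], window: float = 5.0) -> list[tuple[str, float]]:
    """Collapse bursts of (source_id, timestamp) events into one event per burst.

    An event is kept only if the previous event from the same source is more
    than `window` seconds older; the first event of each burst survives.
    """
    kept: list[tuple[str, float]] = []
    last_seen: dict[str, float] = {}
    for source, ts in sorted(events, key=lambda e: e[1]):
        if source not in last_seen or ts - last_seen[source] > window:
            kept.append((source, ts))
        last_seen[source] = ts
    return kept

def decide(page: Page, changed_sources: set[str], now: float) -> str:
    """Hybrid policy: events for high-authority pages, schedule plus SLO check for the rest."""
    if page.high_authority and page.sources & changed_sources:
        return "recompile_now"
    if now - page.last_compiled > page.freshness_slo:
        return "flag_stale"
    return "wait_for_schedule"

events = [("crm", 0.0), ("crm", 1.0), ("crm", 2.5), ("erp", 3.0), ("crm", 60.0)]
logical_updates = coalesce(events)

policy = Page("returns-policy", frozenset({"crm"}), True, last_compiled=0.0, freshness_slo=3600.0)
faq = Page("faq", frozenset({"cms"}), False, last_compiled=0.0, freshness_slo=3600.0)
```

With these inputs, the three `crm` events in the first burst collapse into one, `decide(policy, {"crm"}, now=10.0)` recompiles immediately, and `decide(faq, {"cms"}, now=7200.0)` flags the page as past its SLO rather than recompiling on the event.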

Then there's the cost side. Recompiling a 100-article wiki is cheap. Recompiling a ten-million-document enterprise corpus every time a single source changes is financially absurd. The compilation step has to be incremental. Production RAG systems already do this at the document level with content hashing and differential re-indexing; compilation has to do it at the claim level. When source X changes, only the wiki pages that cite source X get recompiled, along with any pages that backlink to them. This is the part nobody has built yet at enterprise scale, because it requires the compiled knowledge to track provenance at proposition-level granularity, not document-level. Every claim in every compiled page needs to know which raw sources produced it, so the compiler can work out the blast radius of any given source change.
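
The blast-radius calculation is simple once claim-level provenance exists; the hard part is maintaining that provenance. A minimal sketch, assuming claims are stored with the set of sources that produced them and that outbound links per page are known (the `Claim` type, `links_to` map, and page names are hypothetical):

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    page: str
    claim_id: str
    sources: frozenset[str]

def blast_radius(
    changed_source: str,
    claims: list[Claim],
    links_to: dict[str, set[str]],
) -> tuple[list[tuple[str, str]], set[str]]:
    """Return (affected claims, pages to recompile) for one source change.

    `links_to` maps a page to the pages it links to. Pages to recompile are
    those citing the changed source, plus pages that link to them (one hop).
    """
    affected = [(c.page, c.claim_id) for c in claims if changed_source in c.sources]
    direct = {page for page, _ in affected}
    linking = {src for src, targets in links_to.items() if targets & direct}
    return affected, direct | linking

claims = [
    Claim("returns-policy", "c1", frozenset({"policy.pdf"})),
    Claim("returns-policy", "c2", frozenset({"faq.md"})),
    Claim("pricing", "c3", frozenset({"erp/catalog"})),
]
links_to = {"support-overview": {"returns-policy"}, "pricing": {"catalog"}}

affected, pages = blast_radius("policy.pdf", claims, links_to)
```

Here a change to `policy.pdf` touches one claim out of three and two pages out of the wiki, and `pricing` is left alone. This sketch stops at one hop of backlinks, which matches the rule above; whether a production compiler should follow links transitively is a design decision I'm not settling here.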

Which is where provenance comes in, and where the infrastructure layer gets heavy. I've written about this as a Data Bill of Materials: SLSA-style attestations applied to data rather than software builds. Every compiled artifact carries a signed manifest of which sources, at which versions, with which content hashes, produced which claims. When a downstream AI system serves a wrong answer, you can trace it back through the manifest to the specific source record that caused the failure. Production RAG systems don't have this, because the retrieval step obliterates the link between the returned chunk and the rest of the corpus. Compiled knowledge preserves it by construction, as long as you build the manifest as part of the compile.
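
A toy version of such a manifest shows the shape of the idea. To be clear about the assumptions: the field names are invented, and the HMAC with a shared key is a stand-in I chose to keep the example within the standard library. Real attestation schemes generally use asymmetric signatures and a defined envelope format, which this does not attempt.

```python
import hashlib
import hmac
import json

def _payload(body: dict) -> bytes:
    # Canonical serialization so the same body always signs the same bytes.
    return json.dumps(body, sort_keys=True).encode("utf-8")

def build_manifest(artifact: str, claims: dict, key: bytes) -> dict:
    """Bundle claim-to-source provenance with a signature over the whole body."""
    body = {"artifact": artifact, "claims": claims}
    signature = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
    return {"body": body, "signature": signature}

def verify(manifest: dict, key: bytes) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = hmac.new(key, _payload(manifest["body"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, manifest["signature"])

def trace(manifest: dict, claim_id: str) -> list[dict]:
    """Walk back from a served claim to the source records that produced it."""
    return manifest["body"]["claims"][claim_id]

key = b"demo-key-not-for-production"   # hypothetical shared secret
claims = {
    "c1": [{
        "source": "cms/returns-policy",
        "version": "v7",
        "sha256": hashlib.sha256(b"Returns accepted within 30 days.").hexdigest(),
    }],
}
manifest = build_manifest("wiki/returns-policy.md", claims, key)
origin = trace(manifest, "c1")
```

If a downstream system serves a wrong answer built on claim `c1`, `trace` points at `cms/returns-policy` version `v7`, and `verify` tells you whether the manifest itself was altered after the compile.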

Then there's where compilation runs. The personal wiki lives on one laptop because one person's corpus fits on one laptop. Enterprise data doesn't. When 75% of enterprise data lives outside traditional data centers, the compiler can't be a centralized script. It has to run where the data lives, which means it's a distributed system. The compiled wiki pages can aggregate upward to a central index, but the compilation itself has to meet the data. This is not an exotic requirement. It's what every competent data pipeline has been doing for a decade. The difference is that now the pipeline's output is a knowledge graph an LLM reads, rather than a dashboard a human reads.

Authority is the final layer, and the one that makes enterprise compilation meaningfully harder than personal. In Karpathy's wiki, every source is trustworthy because he curated them. In an enterprise, sources have stratified authority. Legal's approved policy document outranks a product manager's draft memo. The compiler needs explicit authority rankings so that when sources conflict, the compilation step knows which one wins, and the wiki page records both the winning claim and the losing one, so auditors can see what got overruled and why.
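
In code, the authority rule is a ranked lookup plus a record of what lost. The tiers and their numeric ranks below are hypothetical; in practice they would be set by whoever governs the sources, not hard-coded by the compiler's author.

```python
from dataclasses import dataclass

# Assumption: illustrative authority tiers, higher number wins.
AUTHORITY = {"legal_policy": 3, "knowledge_article": 2, "draft_memo": 1}

@dataclass(frozen=True)
class SourcedClaim:
    source: str
    tier: str
    text: str

def resolve(conflicting: list[SourcedClaim]) -> dict:
    """Pick the highest-authority claim and keep the overruled ones for audit.

    Ties keep input order, because Python's sort is stable.
    """
    ranked = sorted(conflicting, key=lambda c: AUTHORITY[c.tier], reverse=True)
    winner, losers = ranked[0], ranked[1:]
    return {
        "winner": winner,
        "overruled": [
            {"claim": c, "reason": f"{c.tier} ranks below {winner.tier}"}
            for c in losers
        ],
    }

official = SourcedClaim("cms/returns-policy", "legal_policy", "Returns accepted within 30 days.")
informal = SourcedClaim("kb/agent-tips", "knowledge_article", "Returns accepted within 45 days.")
decision = resolve([informal, official])
```

The wiki page would render `decision["winner"]` as the claim and keep `decision["overruled"]` alongside it, which is what lets an auditor see that the 45-day answer existed and why it lost.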

None of this is speculative. The underlying capabilities exist as discrete components in enterprise data stacks already. What doesn't exist is the integrated layer that combines them into Karpathy's compilation step at distributed scale.

Fine-tuning lives in a different quadrant

The other architecture worth contrasting is fine-tuning. If the problem is "get the model to know my data," an adjacent answer is to bake the data into the weights. It's a real option and it has real advantages: lower inference cost, no runtime retrieval dependency, good latency.

The trade that fine-tuning makes is locking knowledge into the model. Update a policy document and the model doesn't know. Correct an error in a source record and the model doesn't know. Get a regulatory update and the model doesn't know until the next training run. Fine-tuning is the right architecture for stable, slow-moving domains where the knowledge is authoritative at training time and stays authoritative for months. It's the wrong architecture for anything that changes on the operational tempo of the business.

Compilation sits in the opposite quadrant. Higher inference cost because the model reads the compiled wiki at runtime. Lower knowledge-staleness cost because the wiki updates as sources update, which matters more than most teams account for. The right choice for domains where the knowledge is dynamic, governed, or contested. Which is most of what enterprises actually care about.

Fine-tuning and compilation aren't competing technologies. They're complementary. The model handles reasoning patterns and capabilities. The compiled wiki handles current facts and the authority graph. A well-designed system uses fine-tuning for the first and compilation for the second, instead of smashing them together into a single fine-tune-everything pipeline that decays the moment a policy changes.

The product nobody's built

Karpathy called his setup "a hacky collection of scripts." When an entrepreneur responded that "every business has a raw/ directory and nobody's ever compiled it," Karpathy agreed and suggested the distributed version could be "an incredible new product," a category of its own.

He's right. The compiled knowledge base, continuously maintained with provenance tracking and distributed across wherever data lives, is the missing infrastructure layer between "AI that works in demos" and "AI that works in production." The industry spent three years optimizing RAG: better chunking, better embeddings, better rerankers. All of that work matters. None of it addresses the fundamental problem, which is that retrieval without compilation is stateless. It re-derives knowledge from scratch on every query. It can't tell you whether its sources have changed since they were last indexed. It has no mechanism for catching contradictions between sources before a wrong answer ships.

Karpathy built the prototype on his laptop. The question is who builds the distributed version.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen about the real-world challenges of data preparation for machine learning, focusing on operational, compliance, and cost. I'd love to hear your thoughts!