Agents Don't Live in Data Centers

Agentic AI is the first workload where the physics of latency and data locality aren't optional. The $700B centralized infrastructure bet was built for a different kind of AI.

Here is the question that has been quietly making the rounds in strategy decks since the Goldman note leaked: what if we built the wrong thing?

That Goldman Sachs internal research note said something nobody on stage had quite said out loud: roughly $400 billion spent on AI infrastructure globally in 2025, and the revenue, margin, and competitive impact remain... unproven. Market re-priced. Hyperscalers paused. The question landed.

I ran ML infra at Google and at Microsoft. I have watched a lot of "we are building the wrong thing" panics. Most of them are wrong about being wrong. This one is closer to right than I would like.

The bet itself is staggering. Futurum Group estimates hyperscaler capex sprints toward $700B annually in 2026. Civilizational-scale wager on a single thesis: AI lives in centralized data centers, served via cloud, delivered over the public internet.

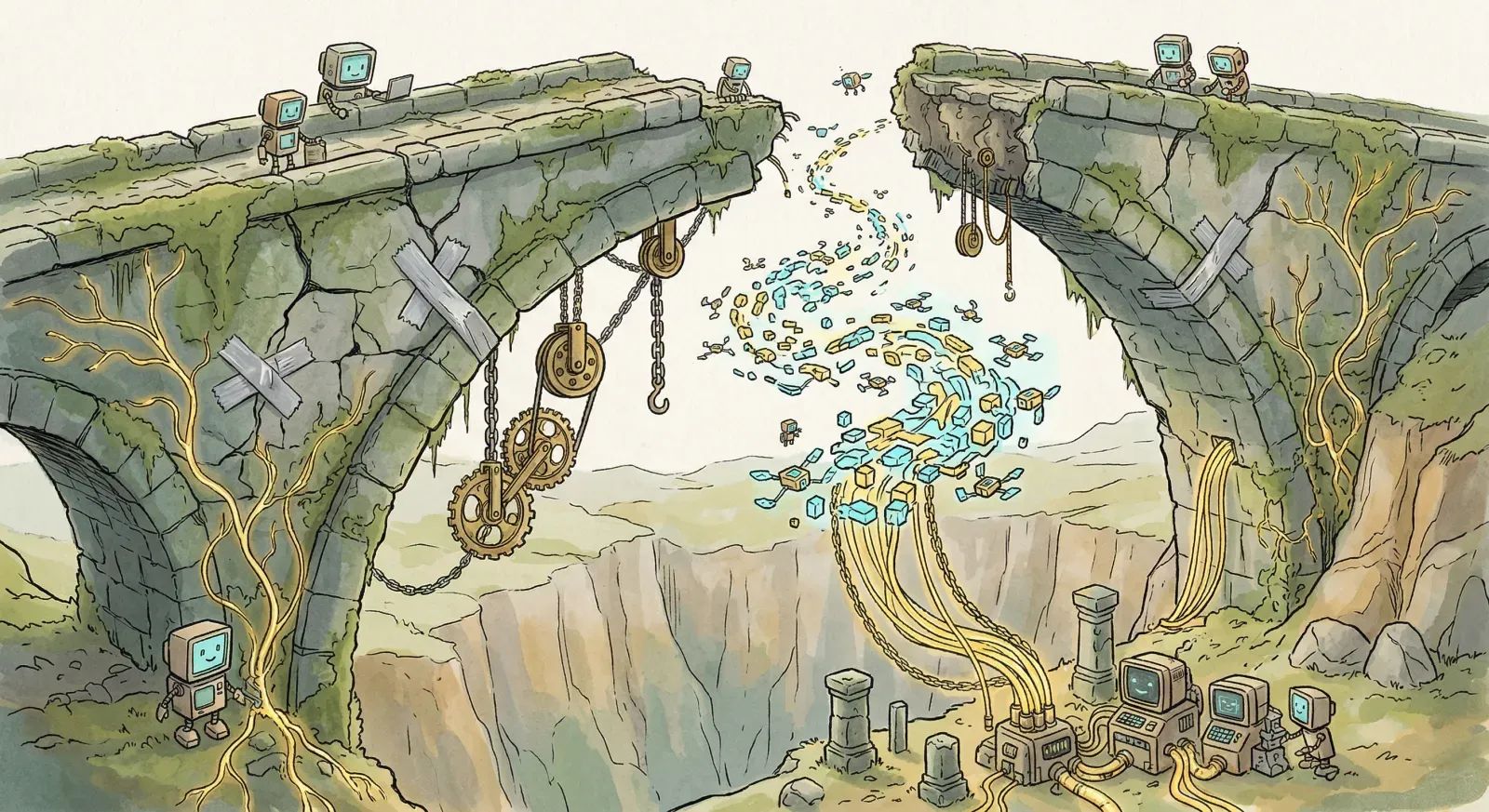

Agents break that thesis. Not in some abstract way. They just do.

A chatbot is forgiving. You ask a question, and a few hundred extra milliseconds before the token stream delivers your answer is a nuisance at most, because you are waiting anyway. Agents do not wait. They orchestrate. They decide. They drive devices. They coordinate with other agents. And the moment a round trip back to a data center stretches past the loop budget (the time the agent has to sense, decide, and act), the agent either makes a bad call or makes no call at all. Neither is great.
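To make the loop budget concrete, here is a minimal Python sketch. It is an illustration, not code from any production system: `remote_plan`, `local_plan`, `decide`, and the 50 ms budget are hypothetical names and numbers, and the data center round trip is simulated with a sleep. The only point is the shape of the logic: ask the data center, and if the answer does not arrive inside the budget, act on a local answer instead of stalling.

```python
import asyncio

LOOP_BUDGET_S = 0.05  # hypothetical 50 ms loop budget, chosen for illustration


async def remote_plan(observation: str, rtt_s: float) -> str:
    """Stand-in for a call to a centralized model; rtt_s simulates the round trip."""
    await asyncio.sleep(rtt_s)
    return f"remote-plan({observation})"


def local_plan(observation: str) -> str:
    """Stand-in for a smaller on-device policy that answers immediately."""
    return f"local-plan({observation})"


async def decide(observation: str, rtt_s: float, budget_s: float = LOOP_BUDGET_S) -> str:
    """Prefer the remote answer, but never wait longer than the loop budget."""
    try:
        return await asyncio.wait_for(remote_plan(observation, rtt_s), timeout=budget_s)
    except asyncio.TimeoutError:
        # The round trip overran the budget: fall back to the local decision.
        return local_plan(observation)


if __name__ == "__main__":
    print(asyncio.run(decide("obstacle", rtt_s=0.005)))  # fast link: remote answer
    print(asyncio.run(decide("obstacle", rtt_s=0.300)))  # slow link: local fallback
```

A chatbot never needs the `except` branch. An agent in a tight loop lives in it whenever the WAN has a bad day.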

SiliconANGLE reported earlier this month on what is happening in carrier-class deployments: you cannot just bolt an agent onto your existing cloud stack and expect it to work at scale. Agents need local compute. They need peer-to-peer coordination that does not call home. They need decision loops in the tens of milliseconds. They need to run across thousands of distributed sites at once. None of those properties live anywhere near an Ashburn campus.

That is where the Goldman note connects to the buildout. The capex assumed the future would rhyme with the past. Bigger clouds. Fancier models. More racks. But once "just send it to the cloud" stops working (and for agents it does), the entire cost structure, deployment model, and compute strategy have to move with it.

Ciena has a piece making the case that networks have to evolve from "passive interconnects" into programmable infrastructure. That is consultant-language for: the network itself becomes part of the compute layer. Intelligence cannot sit only at the edge. Cannot sit only in the cloud. It has to be distributed, and the network has to decide which slice runs where based on latency, cost, and reliability. (Easy to say. Hard to ship. Source: have shipped some of it.)

AT&T's CTO cast doubt on pure far-edge compute in a recent interview, and he is right to be skeptical. Agents are not purely local either. The myth that breaks is the other one — that all agents work best in the cloud and the edge is glorified caching. The truth is messier. Parts of the decision loop run locally. Parts coordinate with peers at regional aggregation. Parts reach back to centralized training or human review. It is a spectrum. Pretending otherwise is how you ship a system that hits a SEV-1 the day a customer's WAN gets flaky.
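One way to picture "which slice runs where" is as a constrained choice across tiers. The sketch below is a toy under stated assumptions: the `Tier` fields, the `place` function, and every number in `TIERS` are invented for illustration and are not measurements from any real network. It picks the cheapest tier that still meets a latency deadline and an availability floor, which is one plausible reading of "based on latency, cost, and reliability."

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    name: str
    rtt_ms: float         # assumed round-trip latency to this tier
    cost_per_call: float  # assumed relative cost, arbitrary units
    availability: float   # assumed probability the tier is reachable


# Illustrative numbers only. Change them and the answers change;
# the selection logic is the part that matters.
TIERS = (
    Tier("local", rtt_ms=2, cost_per_call=3.0, availability=0.9999),
    Tier("regional", rtt_ms=20, cost_per_call=1.5, availability=0.999),
    Tier("central", rtt_ms=120, cost_per_call=1.0, availability=0.99),
)


def place(deadline_ms: float, min_availability: float,
          tiers: Sequence[Tier] = TIERS) -> Optional[Tier]:
    """Return the cheapest tier meeting both constraints, or None if none does."""
    feasible = [
        t for t in tiers
        if t.rtt_ms <= deadline_ms and t.availability >= min_availability
    ]
    return min(feasible, key=lambda t: t.cost_per_call, default=None)


if __name__ == "__main__":
    for deadline in (10, 50, 500):
        tier = place(deadline_ms=deadline, min_availability=0.99)
        print(deadline, "ms ->", tier.name if tier else "no feasible tier")
```

With these made-up numbers, a 10 ms step lands locally, a 50 ms step lands at the regional tier, and a 500 ms step can go all the way back to the central site. Same agent, three placements, which is the spectrum in miniature.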

Dell's edge-AI predictions capture the operational shape. Spectro Cloud's enterprise survey tracks how organizations are re-thinking topology. ZEDEDA on industrial operations shows the pattern most clearly. As autonomous systems take on more responsibility in manufacturing, logistics, and infrastructure, you stop being able to route every decision through a central brain. A defect-detection robot does not wait for a network round-trip. A traffic system coordinating thousands of intersections does not wait either. They simply cannot.

The hardware is chasing the thesis. Lenovo's ThinkEdge SE60n Gen 2 — Intel Core Ultra, up to 97 TOPS with integrated AI accelerators — hit select markets this month. Aitech is showing space-qualified edge compute at the Space Symposium. Distributed autonomous compute in orbit, which I cannot believe is a real product I get to mention. These are commercial platforms shipping into use, not lab demos.

Tie this back to the $400B. If agents need distributed intelligence, the market cap wipeout is not just an ROI story. It is a topology story. Deploy the same compute across thousands of smaller distributed systems and you get better characteristics for agentic workloads. Lower latency. Higher availability. And once you back out the cloud-margin tax and the network egress charges, the total cost is plausibly lower too.

This is why the Goldman note landed the way it did. The hyperscalers did not overestimate chatbot demand. The architecture for deploying AI is shifting under them, and the 2024 infrastructure calls might not match what 2027 actually needs.

Centralized infrastructure does not vanish. Training, archival, batch workloads where latency genuinely does not matter — that stays. But for the workloads that change how systems operate (autonomous decision-making, real-time coordination, distributed reasoning across thousands of sites), cloud-first stops being a feature. It becomes a ceiling you cannot punch through with another billion in capex.

Deep Engineering's analysis puts it bluntly: the edge stopped being the margin. It is becoming the center of gravity for deployed AI. That reverses what the last decade of infrastructure spend assumed.

The vendors who saw it first are repositioning. System resilience players are pushing fault tolerance across distributed agent fleets, because the real failure modes show up when the network drops out mid-decision. SiliconANGLE's GTC coverage catches the emerging consensus on the show floor. The hard problems are not "more TFLOPs per dollar." They are: coordinate autonomous systems that lose network connectivity. Make independent decisions without a central validator. Converge on consistent outcomes when communication is intermittent. Distributed systems people have been working on these problems for thirty years. The AI world is catching up.
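For a taste of what those thirty years produced, here is a grow-only counter, one of the simplest conflict-free replicated data types (CRDTs). To be clear about what is assumed: none of the sources above names CRDTs, so this is my pick of a well-known technique for "converge when communication is intermittent," and the `GCounter` class and the two site names are hypothetical. Each site increments only its own slot, and merging takes the per-site maximum, so merges can arrive late, repeated, or in any order and every site still ends up with the same total.

```python
class GCounter:
    """Grow-only counter CRDT: one slot per node, merged by per-slot maximum."""

    def __init__(self, node_id: str):
        self.node_id = node_id
        self.counts = {}  # node id -> highest count seen for that node

    def increment(self, n: int = 1) -> None:
        """Record n local events; a node only ever writes its own slot."""
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + n

    def merge(self, other: "GCounter") -> None:
        """Fold in another replica's state; safe to repeat and to reorder."""
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)

    def value(self) -> int:
        return sum(self.counts.values())


if __name__ == "__main__":
    # Two hypothetical sites count defects while the link between them is down.
    site_a, site_b = GCounter("site-a"), GCounter("site-b")
    site_a.increment(3)
    site_b.increment(2)

    # The link comes back; state is exchanged in both directions.
    site_a.merge(site_b)
    site_b.merge(site_a)
    site_a.merge(site_b)  # a duplicate delivery changes nothing

    print(site_a.value(), site_b.value())
```

No central validator appears anywhere in that code. The catch is that a counter is the easy case; richer agent state needs richer merge rules, and that is where the real engineering lives.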

This is, fundamentally, a systems problem with an AI hat on top. Distribution has to be in the foundation. Not bolted onto a cloud architecture drawn for a different workload. Not added in v2.

The $400B that has not delivered impact did not fail because the models are bad. It was deployed against the wrong topology. The fix is not bigger data centers. It is smaller ones — distributed widely, networked properly, coordinated by the agents themselves.

The agents are not coming to the data centers. The data centers have to go to the agents.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book, based on what I have seen, about the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!