The Permission Problem

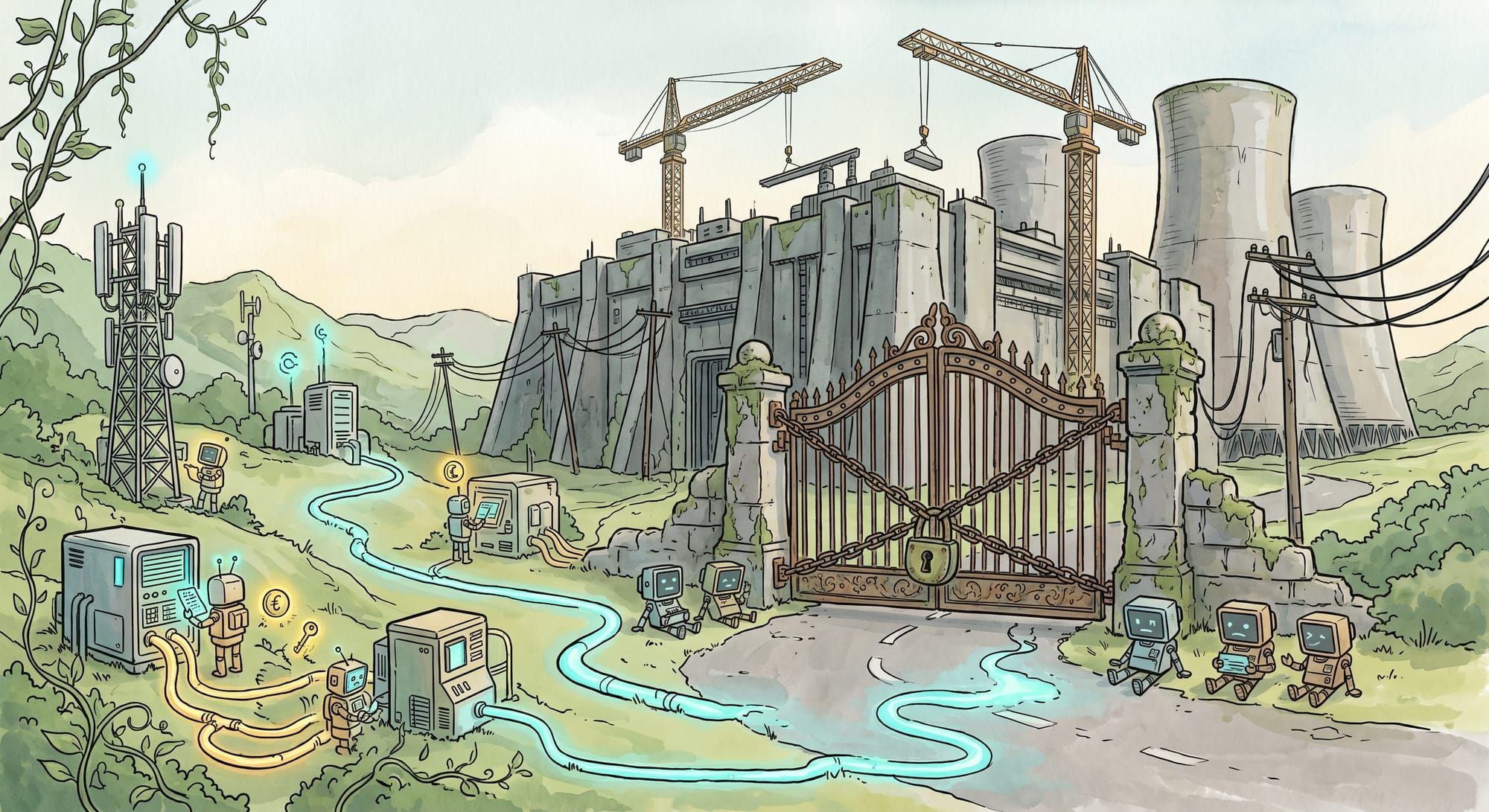

Loudoun County, Virginia, was the most permissive jurisdiction in the United States for hyperscale data center construction. As of this quarter, it has nearly reversed course. Is this the bellwether?

Let's talk about Loudoun County, Virginia.

If you don't work in infrastructure, you've probably never heard of it. If you do, this is THE place. By the commonly cited estimate, somewhere between 60% and 70% of the world's internet traffic routes through facilities sitting in this one Northern Virginia county, and for most of the last decade Loudoun was also the most permissive jurisdiction in the country for hyperscale construction. The Board of Supervisors approved campuses on what was effectively a rubber-stamp cadence, the substation costs got socialized into the broader Dominion Energy rate base, the county collected the property tax revenue, and most of the AI buildout from 2022 through 2024 was financed against the assumption that Loudoun was the median, not the outlier.

This quarter, Loudoun has more active moratorium proposals on its docket than any comparable jurisdiction in the country. That reversal happened in eighteen months. It is happening for reasons that are going to keep happening, in places nobody had on their 2026 risk register.

The bottleneck nobody priced into a 2024 financial model was never going to be technical, even though most of the discourse acted like it would be. Compute is fine, NVIDIA shipped on time, the networking gear arrived, the cooling works in the lab and at scale, and not one of those things is what is going to keep your 2026 workload from coming online. The thing that is going to keep it from coming online is whether a county commissioner, a state public utility commission, or a regional grid operator will let you turn the racks on in the calendar year you actually need them turned on. That answer is moving in real time, and it is moving in the wrong direction for the buildout that just got financed.

A 2 GW campus is a financeable thing on paper. It is a five-year fight in dirt, and the fight is what is changing.

The grid-skeptics had the diagnosis right

The folks who have spent the last two years warning that centralized AI was going to slam into grid limits got the diagnosis exactly right, and at this point I would just like to say sorry to anyone I argued with about it in 2024. Data centers now account for roughly half of all new US electricity demand. Global AI-related electricity consumption is on track for around 1,000 TWh by the end of 2026, which is a midsize industrialized country's worth of electricity. Treating any of this as a footnote, which most of the industry did until about a year ago, was a category error.

But then the same crowd keeps handing me a prescription that does not survive contact with the actual political-economy environment, and that is where the conversation falls apart.

Will isn't the problem. Coalition is.

The fix-the-grid argument assumes that the engineering exists, the financing exists, and the only thing missing is political will. That is half right, in the way that "all I need to climb Everest is a good attitude" is half right. The engineering is mature, DOE has the modernization roadmap, FERC has the interconnection reform proposal, the transmission corridors are mapped, the storage technology is production-ready, demand-response is running in pilot, and none of this is mysterious. What is actually missing is not will. It is coalition, and coalition is the thing that does not show up in an engineering roadmap.

Transmission siting is a state-level fight in most US jurisdictions, and most of the relevant states do not have a clean coalition through it. New generation siting requires winning a NIMBY fight, an environmental review, in a lot of places a tribal sovereignty fight, and increasingly a ratepayer revolt, all stacked on the same project. Substation siting plays out at the county and municipal level, where the constituency that benefits from a hyperscale data center load (a hyperscaler in another time zone) has roughly zero votes in the relevant elections, and the constituency that absorbs the cost (the residential ratepayer, the school district worried about its assessment, the homeowner two miles upwind) has roughly all of them. That is not a failure of imagination. It is a vote count.

I have watched a lot of my friends in this industry get frustrated with voters about this, which I understand and which I also think is short-sighted. The voters are not being unreasonable. They are being asked to underwrite a buildout whose direct cost they pay, whose direct benefit they do not see, and whose externalities (the noise, the water, the visual blight, the higher bills) they live next to. Of course they are saying no. The surprising thing is not that they finally noticed, it is that we expected them not to.

State PUCs noticed. Several have moved hyperscale data center loads into separate rate classes specifically to insulate residential ratepayers from the cost of expansion. Almost no AI press picked it up, which is a miss: once the rate base splits, the financing model that made hyperscale campuses cheap collapses, the hurdle rate goes up, and the build cadence slows. None of this is anyone being unreasonable. It is the cost of asking anyone to be reasonable getting priced in.

"But hyperscalers will scale through it." Are you sure?

The other prescription, the one coming out of the labs and the hyperscalers themselves, is that announced capacity will roughly track delivered capacity, the way it did in 2018 and 2019 and 2020 and 2021. That was a defensible extrapolation through the end of 2024, stopped working visibly in 2025, and is now in open contradiction with the data.

Loudoun is not a one-off. Suburban Phoenix and several Texas exurbs have either passed moratoria or surfaced moratorium proposals into active hearings, and Northern Virginia substation projects that were routine approvals five years ago are now multi-year political fights. Two stories from the last couple of weeks tipped me off that the political turn just crossed a threshold I had not seen yet. CNN ran a piece on April 23 titled "There are fixes for AI's toll on the power grid. Here's why they're not happening." Three days earlier, Fortune published polling showing Americans now associate AI infrastructure with rising electricity bills and have soured on AI as a category as a result. Read the bylines. Notice where those pieces ran, because CNN and Fortune are not Common Dreams. The political turn against centralized AI just migrated out of the activist fringe and into the centrist business and political press, which is the part of the spectrum that most reliably foreshadows where actual zoning votes are going to land in the next election cycle.

So which side is right? Both, kind of, and also neither in the way that actually matters for the next twenty-four months. The fix-the-grid argument resolves on a presidential-term timeline. The hyperscaler-scale-out argument is hitting the wall right now. The actual workload, the stuff being trained and served and shipped by product teams who do not care about either argument, has to run somewhere, and where it runs is wherever the substation is already humming.

Telco edge: the buildout that already happened

There is already a huge pile of capacity sitting in places nobody is having moratorium fights about. Cell tower compounds. Regional colo footprints. Retired industrial facilities with their substation tie-ins still intact. Telco central offices that were sized in 1996 for a voice and broadband workload that has since contracted by an order of magnitude. The substations are paid for, the easements are paid for, the cooling is roughly fine, and the community license, which is the hard part of all of this, was granted thirty years ago by a public that has long since moved on to caring about other things.

NVIDIA put a number on this at GTC in March: roughly 100,000 telco data center sites globally, with 100 GW of spare capacity already energized. HPE's distributed AI factory rollout approaches the same problem from the enterprise side. The two of them are converging on the answer that has been sitting there the whole time, which the discourse has been politely ignoring because it is not as exciting as building a new 2 GW campus in cornfield Wisconsin.

There is a reflex in this industry, especially among people who learned their economics on the AWS scale curve, to dismiss the whole telco-edge category as too small, too inefficient, too logistically annoying to be the actual answer. That dismissal was defensible when the hyperscale alternative was clearing on a four-year cadence. It is not defensible when the alternative is a 2028 FERC study cycle and a Loudoun moratorium hearing on the same docket. A workload that runs at slightly worse unit economics on permitted infrastructure beats a workload that does not run at all, and the economics that looked bad against a 2024 spreadsheet look fine the moment the comparison case becomes zero.
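If you want the "comparison case becomes zero" point as arithmetic, here is a back-of-envelope sketch in Python. Everything in it is an assumption made up for illustration: the `Option` class, the unit costs, the online years, and the two-year planning window are hypothetical, not figures from any real site.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    cost_per_gpu_hour: float  # hypothetical unit cost in USD
    online_year: int          # hypothetical first year the site can serve load


def usable_gpu_hours(option: Option, need_year: int, horizon_years: int,
                     gpu_hours_per_year: float) -> float:
    """GPU-hours the option can deliver inside the planning window.

    The window runs from need_year up to (not including)
    need_year + horizon_years. Capacity that comes online after the
    window closes contributes nothing, however cheap it is.
    """
    window_end = need_year + horizon_years
    start = max(option.online_year, need_year)
    years_online = max(0, window_end - start)
    return years_online * gpu_hours_per_year


# Illustrative inputs only: a cheaper campus stuck in a queue versus a
# pricier edge site that is already energized.
queued_campus = Option("queued_campus", cost_per_gpu_hour=2.00, online_year=2029)
telco_edge = Option("telco_edge", cost_per_gpu_hour=2.60, online_year=2026)

for opt in (queued_campus, telco_edge):
    hours = usable_gpu_hours(opt, need_year=2026, horizon_years=2,
                             gpu_hours_per_year=8760)
    print(f"{opt.name}: {hours:.0f} usable GPU-hours "
          f"at ${opt.cost_per_gpu_hour:.2f}/hour")
```

With these made-up inputs the cheaper campus delivers zero usable hours inside the window, so its lower unit price never gets a chance to matter.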

I made the structural case for this in Six Million Cell Towers Walk Into a Data Center, and the incumbents have started making the argument for me, which has historically been the moment a position quietly graduates from contrarian to obvious.

So what do you actually do about any of this

For three years the AI infrastructure debate ran at the engineering layer, in some combination of NVIDIA versus AMD, hyperscaler versus neocloud, and centralized versus federated, in roughly that chronological order. None of those framings was wrong on its own terms. They were operating one layer above the constraint that is now actually binding.

The constraint that is now actually binding is whether your load gets to come online in the calendar year you need it, and the answer is unevenly distributed across US geography in ways the planning process has not caught up to. Some sites have the permission. Most do not. The architecture that wins the next two years is the one that pays attention to which is which and routes the workload accordingly.
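"Pays attention to which is which and routes the workload accordingly" can be sketched in a few lines of Python. This is a toy, not a scheduler: the `Site` and `Workload` classes, the `route` function, the greedy rule, and every number below are hypothetical and exist only to show the shape of the decision, which is to filter on permission first and optimize second.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Site:
    name: str
    energized_year: int  # hypothetical year the site can actually draw power
    free_mw: float       # hypothetical spare capacity


@dataclass(frozen=True)
class Workload:
    name: str
    needed_year: int
    mw: float


def route(workloads: List[Workload], sites: List[Site]) -> Dict[str, Optional[str]]:
    """Greedily place each workload on a site that is energized in time.

    A site is eligible only if it is energized by the year the workload
    needs it and still has enough spare megawatts. Among eligible sites
    we pick the one with the most remaining headroom. A workload with no
    eligible site maps to None, i.e. it does not run on schedule.
    """
    remaining = {s.name: s.free_mw for s in sites}
    placement: Dict[str, Optional[str]] = {}
    for w in sorted(workloads, key=lambda w: w.needed_year):
        eligible = [s for s in sites
                    if s.energized_year <= w.needed_year
                    and remaining[s.name] >= w.mw]
        if not eligible:
            placement[w.name] = None
            continue
        best = max(eligible, key=lambda s: remaining[s.name])
        remaining[best.name] -= w.mw
        placement[w.name] = best.name
    return placement


# Illustrative inputs only.
sites = [
    Site("queued_campus", energized_year=2029, free_mw=500.0),
    Site("telco_edge", energized_year=2025, free_mw=20.0),
]
workloads = [
    Workload("inference_2026", needed_year=2026, mw=15.0),
    Workload("training_2026", needed_year=2026, mw=200.0),
]
placement = route(workloads, sites)
print(placement)
```

In this toy setup the small inference job lands on the already-energized edge site and the large training job lands nowhere, because the only site big enough for it is not energized until three years after it is needed.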

If your 2026 inference is sitting in a FERC interconnection queue with a 2029 study cycle, that capacity does not exist for you, and the same is true if your training run is waiting on a substation transformer with an 18-month lead time. Anything else you have planned that depends on Loudoun voting yes is on a similar timeline, and the timeline is worse than your roadmap admits.

Loudoun is not voting yes.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen of the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!