The Only Guarantee Is Your Catalog Will Be Wrong. Eventually.

The industry has converged on the right diagnosis: metadata is the substrate for enterprise AI. Every productized prescription is wrong in the same way. Catalogs run after the data has already moved, which is why every catalog you have ever seen is wrong, and why the only question is how fast.

In the last thirty days, the enterprise data category did something it has not done in a decade. It agreed with itself.

IBM closed the $11 billion Confluent acquisition on March 17, framing the deal explicitly as making real-time data the engine of enterprise AI agents, with lineage, policy enforcement, and quality controls bundled into the streaming primitive. Equinix launched Fabric Intelligence on April 15 as an AI-native operational layer for the network. MIT Technology Review published a piece on April 22 arguing that AI cannot deliver business value without a strong data fabric. Microsoft repositioned Fabric as an MCP-driven agentic operating system. Atlan was named a Leader in the 2026 Gartner Magic Quadrant for Data and Analytics Governance. The diagnosis is finally consensus: metadata is the substrate, and AI without it produces confidently wrong answers.

The diagnosis is correct. The prescription is wrong, and it is wrong in the same way across nearly every productized version of it.

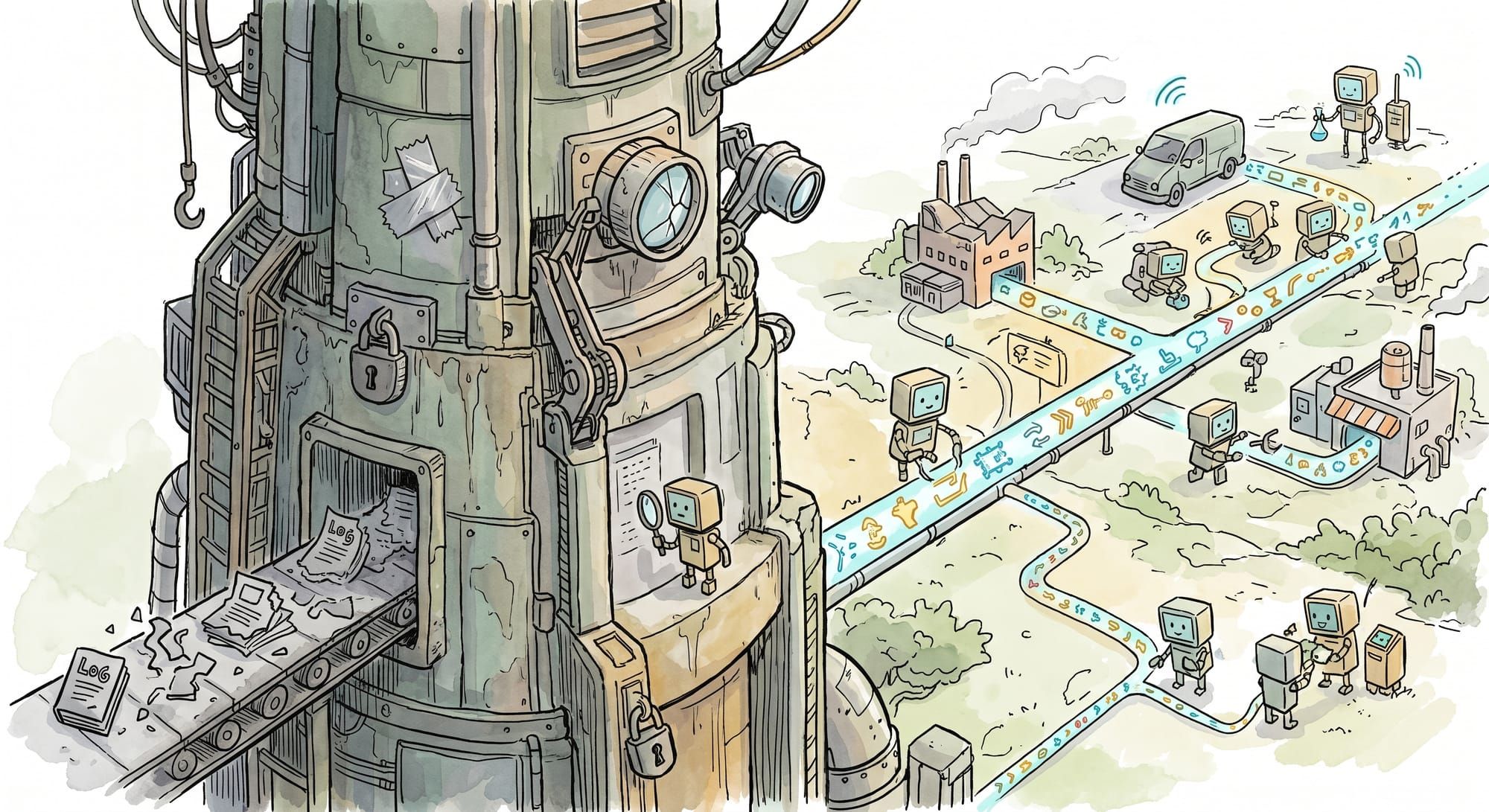

Every one of these systems is some flavor of catalog-after-the-fact. The data moves through the pipeline. The pipeline emits artifacts. Sometime later, a catalog crawls the artifacts, parses logs, scrapes lineage from query histories, and reconstructs a model of what just happened. By the time it finishes, the source context that produced the data is gone. The catalog records what was true on the day it indexed. That is also the day the catalog starts decaying.

This is why every enterprise data catalog you have ever seen is wrong. Not eventually wrong. Currently wrong. The only question is how wrong, and how fast it gets wronger.

The crawl can't outrun the source

Schemas drift. Sources move. The team that owned the upstream Salesforce report leaves and the new team renames three columns. A regulatory feed updates its document IDs. A SaaS vendor changes its API response shape in a minor version bump. The marketing team starts loading data from a new ad platform and nobody tells the data team. None of these events are unusual. All of them happen monthly in a normal enterprise.

In a wrap-at-ingest world, each of those events causes a metadata change to ride alongside the data through the pipeline. In a catalog-after-the-fact world, each of those events is invisible until the next crawl, and the crawl might miss it entirely if the lineage chain depends on log parsing that the source change happened to break.

The structural problem is not that catalogs are bad software. Atlan, Collibra, DataHub, and the rest are competently built and serve real purposes. The structural problem is topology. A catalog runs centralized, after the data has already moved, looking backward through artifacts that have lost their context. The only thing the catalog has to work with is whatever the pipeline thought to log on the way out. In most pipelines, that is not very much.

Gartner's prediction, that through 2026 organizations will abandon 60% of AI projects that are not supported by AI-ready data, captures the operational consequence. The typical enterprise has context scattered across 8 to 12 disconnected tools. The catalog is supposed to unify that context. What it actually does is unify a stale snapshot of that context, refreshed on a cadence that is always too slow for the rate at which sources actually change. AI agents reading from that catalog produce confident, sourced, completely wrong answers. The retrieval looks rigorous. The substrate it is reading was already wrong by the time the catalog finished crawling.

Wrap the row, not the report

The fix is not a better catalog. The fix is a different topology.

In a wrap-at-ingest pipeline, the unit of data moving through the system is not a row. It is a row plus a signed manifest of where the row came from, when it was ingested, what its source authority is, what content hash the source had at ingest time, what transformations have been applied, and what claims this row contributes to. The manifest is part of the artifact. Every downstream join, aggregation, model invocation, and retrieval inherits it for free, because it travels with the data.
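To make "a row plus a signed manifest" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the field names, the `wrap_at_ingest` and `verify` helpers, and the shared HMAC key are hypothetical, and a real ingest agent would more likely hold its own key or use asymmetric signatures. The point is only that the manifest is built and signed at the moment of ingest and then travels with the row.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical demo key. A real ingest agent would hold its own key
# (or use asymmetric signatures) rather than a shared constant.
SIGNING_KEY = b"demo-key-not-for-production"


def _sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _canonical(obj) -> bytes:
    # Stable JSON encoding so the same content always hashes the same way.
    return json.dumps(obj, sort_keys=True, default=str).encode("utf-8")


@dataclass(frozen=True)
class Manifest:
    source_uri: str            # where the row came from
    source_authority: str      # how much the source should be trusted
    source_content_hash: str   # hash of the source as seen at ingest time
    ingested_at: str           # UTC timestamp of ingest
    row_hash: str              # binds the manifest to this specific row
    transformations: tuple = ()
    claims: tuple = ()


@dataclass(frozen=True)
class WrappedRow:
    row: dict
    manifest: Manifest
    signature: str


def _sign(manifest: Manifest) -> str:
    payload = _canonical(asdict(manifest))
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def wrap_at_ingest(row, source_uri, source_authority, source_bytes, claims=()):
    """Build and sign the manifest at ingest, while the source is still in hand."""
    manifest = Manifest(
        source_uri=source_uri,
        source_authority=source_authority,
        source_content_hash=_sha256(source_bytes),
        ingested_at=datetime.now(timezone.utc).isoformat(),
        row_hash=_sha256(_canonical(row)),
        claims=tuple(claims),
    )
    return WrappedRow(row=row, manifest=manifest, signature=_sign(manifest))


def verify(wrapped: WrappedRow) -> bool:
    """Check that neither the row nor its manifest changed since signing."""
    row_ok = wrapped.manifest.row_hash == _sha256(_canonical(wrapped.row))
    sig_ok = hmac.compare_digest(wrapped.signature, _sign(wrapped.manifest))
    return row_ok and sig_ok
```

Any downstream consumer that can call `verify` gets a cheap answer to "is this still the row that was ingested, from the source it claims?" without asking a catalog.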

This is not theoretical. The SLSA specification defines exactly this primitive for software builds: every artifact carries a signed attestation of which sources, at which versions, with which build steps, produced it. When a downstream vulnerability shows up, you can trace it back to the specific source commit that introduced it. The category name in the data world is the Data Bill of Materials, and a handful of teams are quietly building the components, but no one has assembled the integrated layer yet at production scale.

The hard part is granularity. A document-level provenance record is not enough. When a source changes, you need to know which claims depended on it, not just which documents referenced it. That requires the wrap step to track provenance at the row level, with each row carrying a manifest of which sources, which versions, and which authority rankings produced it. When the source changes, the system can compute the blast radius (which claims need to be re-derived, which downstream models need to be re-run, which dashboards are now suspect) without scanning the entire pipeline. I wrote about the failure mode this prevents in The Missing Part of the Pipeline, and again in The Time Value of Data. The catalog-after-the-fact topology cannot prevent it, because by the time the catalog notices the source has changed, the wrong answers have already been served.
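The blast-radius computation is less exotic than it sounds. As a rough sketch, assume the manifests have already been folded into a dependency index where each edge says "this thing was derived from that thing." The `LineageIndex` class and the node names below are hypothetical; the traversal is an ordinary breadth-first walk over dependents, which touches only what actually depends on the changed source.

```python
from collections import defaultdict, deque


class LineageIndex:
    """Hypothetical index of 'downstream was derived from upstream' edges."""

    def __init__(self):
        self._dependents = defaultdict(set)

    def record(self, upstream: str, downstream: str) -> None:
        self._dependents[upstream].add(downstream)

    def blast_radius(self, changed: str) -> set:
        """Return everything transitively derived from the changed node."""
        affected = set()
        queue = deque([changed])
        while queue:
            node = queue.popleft()
            for dependent in self._dependents.get(node, ()):
                if dependent not in affected:
                    affected.add(dependent)
                    queue.append(dependent)
        affected.discard(changed)  # guard against cycles in the lineage
        return affected


index = LineageIndex()
index.record("source:regulatory_feed", "claim:filing_status")
index.record("source:crm_export", "claim:customer_count")
index.record("claim:filing_status", "model:risk_score")
index.record("model:risk_score", "dashboard:compliance")

suspect = index.blast_radius("source:regulatory_feed")
```

Here `suspect` contains the filing-status claim, the risk model, and the compliance dashboard, and nothing derived only from the CRM export. With document-level provenance you could not draw that line; you would have to re-run everything that ever referenced the document.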

Why this can only run distributed

There is a temptation to look at wrap-at-ingest and conclude it is a centralized data-fabric problem. Run a big platform. Stream everything through it. Wrap on the way through. This is roughly the Microsoft Fabric pitch coming out of FabCon 2026, and it is a real and useful thing. It is also insufficient.

The reason is that most enterprise data does not live where the centralized fabric runs. Roughly 75% of enterprise data is generated outside traditional data centers, in factories, retail locations, vehicles, edge gateways, OT networks, and customer endpoints. By the time that data has been streamed to a centralized fabric to be wrapped, the source context that the wrap was supposed to capture is already partial. You can wrap whatever metadata survived the trip. You cannot wrap the metadata that was lost in flight.

Wrap-at-ingest has to run where the data is born, which means it has to run distributed. The agent that ingests the source has to be the agent that signs the manifest, because it is the only agent in the pipeline that has direct access to the source's actual state at the moment of ingest. Once the data has hopped through a centralized stream processor, that direct access is gone, and the manifest is, at best, a recreation. The compiled metadata can aggregate upward, into a central index, a global catalog, or a federated query layer, but the compilation step itself must happen where the data is. This is the same architectural pattern every competent distributed pipeline has followed for a decade. The only thing changing is what the pipeline emits. It used to emit dashboards. Now it has to emit a manifest an LLM can read.

The incumbents are making the argument

IBM's framing of the Confluent close is closer to the structural answer than any of the pure-catalog vendors. "Lineage, policy enforcement, and quality controls included" alongside the streaming primitive is the right instinct. Bundle the metadata with the moment of ingest. Don't make the catalog catch up later.

What IBM has not yet built, and what no one has yet built end to end, is the part that makes the bundle ride with the data forever afterward, through every join, every aggregation, every model that consumes it, with claim-level granularity preserved through every transform. The streaming layer is the entrance. The substrate has to extend through the entire pipeline, all the way to the LLM that reads a claim and either trusts it because the manifest checks out or flags it because the source has changed since the claim was last derived. Streaming is necessary. It is not sufficient.
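The read-time half of that sentence, trust the claim or flag it, can be sketched in a few lines. This is an illustration under assumptions, not anyone's shipping design: the `Claim` shape, the `check_claim` function, and the `fetch_source` callback are all hypothetical, and a real system would probably compare against a cheaply maintained source hash rather than re-reading the source on every query.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Claim:
    text: str
    source_uri: str
    source_content_hash: str  # hash recorded in the manifest at derivation time


def check_claim(claim: Claim, fetch_source: Callable[[str], bytes]) -> str:
    """Return 'trusted' if the source still matches the manifest, else 'stale'."""
    current_hash = hashlib.sha256(fetch_source(claim.source_uri)).hexdigest()
    return "trusted" if current_hash == claim.source_content_hash else "stale"


# Hypothetical in-memory stand-in for the real sources.
sources = {"feed://rates": b"rate=4.25"}
claim = Claim(
    text="The reference rate is 4.25",
    source_uri="feed://rates",
    source_content_hash=hashlib.sha256(b"rate=4.25").hexdigest(),
)

before = check_claim(claim, sources.__getitem__)
sources["feed://rates"] = b"rate=4.50"  # the source changes after derivation
after = check_claim(claim, sources.__getitem__)
```

`before` comes back as trusted and `after` as stale. The decision is made from the manifest the claim carries, not from whatever a crawler happened to index last week.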

Equinix's Fabric Intelligence rollout is making a similar argument from the network layer. So is Scale's "AI-Native Data Layer" framing. I have written before about the moment when the incumbents make your argument. This is another one. The diagnosis is right. The prescriptions stop one step short of where the architecture has to go.

The thing that compounds

The reason this matters is that almost nothing in the AI stack actually compounds.

Models do not compound. The current frontier model is a depreciating asset; the next one will replace it within a year. Datasets do not compound; their relevance decays as the world moves on. Context windows do not compound, because they are reconstructed from scratch on every query. Embeddings do not compound; they get re-derived when the embedding model changes. Even the painstakingly built RAG indices that companies have spent two years optimizing do not compound; they are exactly as fresh as their last crawl, and as accurate as the source context they had at crawl time.

Provenance compounds. Every wrapped artifact you produce inherits the wrap of the artifacts it was derived from. The manifest grows richer with every transform. The blast-radius computation gets more precise as the lineage graph deepens. The audit trail extends without anyone having to manufacture it. Every claim an agent can prove is a claim a regulator, an auditor, or a customer can verify. Every claim it cannot prove is a claim that should not have been made in the first place.
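Inheritance is the mechanism behind the compounding. A minimal sketch, with a hypothetical `Provenance` record and `derive` helper: every derived artifact takes the union of its parents' sources and appends its own step to their transformation history, so nobody has to reconstruct lineage after the fact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Provenance:
    sources: frozenset          # every versioned source this artifact depends on
    transformations: tuple = () # ordered history of steps applied so far


def derive(step: str, *parents: Provenance) -> Provenance:
    """Provenance for an artifact produced by applying `step` to `parents`."""
    sources = frozenset().union(*(p.sources for p in parents))
    # Keep each parent's history once, in first-seen order, then add this step.
    history = tuple(dict.fromkeys(t for p in parents for t in p.transformations))
    return Provenance(sources=sources, transformations=history + (step,))


orders = Provenance(frozenset({"crm://orders@v3"}), ("normalize_currency",))
ads = Provenance(frozenset({"ads://spend@v7"}))

joined = derive("join_on_campaign", orders, ads)
rollup = derive("monthly_rollup", joined)
```

By the time you reach `rollup`, it knows it depends on both versioned sources and on all three steps, even though the rollup code never mentioned either source. The flat, de-duplicated history is a simplification; a fuller version would keep the lineage as a graph.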

Catalogs run after the data has moved, and they decay against a moving target. Wrapped metadata runs with the data, and it accumulates against the same target. The asymmetry is structural, not preferential. One of these two architectures works at enterprise scale and the other one keeps producing the failure mode Gartner is measuring.

The boring true thing is this. Your catalog will be wrong. The only question is whether you are building infrastructure that depends on the catalog being right, or infrastructure that does not need the catalog to be right because the metadata is already where it needs to be.

The only guarantee is that your catalog will be wrong. Eventually. Build for that.

Want to learn how intelligent data pipelines can reduce your AI costs? Check out Expanso. Or don't. Who am I to tell you what to do.

NOTE: I'm currently writing a book based on what I have seen of the real-world challenges of data preparation for machine learning, focusing on operations, compliance, and cost. I'd love to hear your thoughts!